Managed Private Cloud Single Tenant

MPC Single Tenant (MPC ST) is the dedicated-infrastructure service variant of Managed Private Cloud (MPC) for customers who require maximum robustness, isolation, and security. It provides a fully dedicated environment within an Equinix IBX data center.

MPC ST is a single-tenant compute environment deployed as a cluster of multiple hosts. It provides dedicated compute, storage, and networking resources for a single customer, eliminating the shared-tenancy model, and supports workloads or applications that require consistent, isolated resources. Compute, storage, and networking resources are managed through the MPC Operational Console.

MPC ST Compute Cluster

The MPC ST cluster includes seven or more hosts of the same type. The cluster type determines the availability and SLA characteristics of the service. The following cluster types are supported:

- Single cluster, single datacenter

- Multi‑cluster, multi‑datacenter

- Single cluster, dual datacenter (stretched cluster)

A single cluster, single datacenter configuration is suitable for applications requiring high availability. Disaster recovery for this cluster type is primarily achieved through backup solutions. A multi‑cluster, multi‑datacenter configuration is suitable when applications support built‑in failover mechanisms and can recover from failures.

Host

An MPC ST environment includes a minimum of 7 hosts, where 6 hosts are used for workload execution and 1 host is reserved as spare capacity (N+1).

MPC ST provides a catalog of host types defined by CPU, core count, RAM, and storage.

Cluster sizing

Cluster sizing is based on required compute capacity and availability. A cluster consists of net consumable capacity in number of hosts (N) and includes spare capacity for availability, recovery, and maintenance. Spare capacity requirements depend on cluster size.

- Minimum cluster size is seven (7) hosts (N+1)

- Clusters larger than fifteen (15) hosts require a second spare host (N+2)
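
The spare-capacity rules above can be sketched in a few lines of Python. This is an illustrative helper only: the function name `split_cluster` and the constants are hypothetical, and it assumes the "larger than fifteen hosts" threshold applies to the total host count, including spares.

```python
MIN_CLUSTER_HOSTS = 7     # minimum cluster size (N+1)
SECOND_SPARE_ABOVE = 15   # clusters larger than this need a second spare (N+2)


def split_cluster(total_hosts: int) -> tuple[int, int]:
    """Return (net_hosts, spare_hosts) for a cluster of total_hosts."""
    if total_hosts < MIN_CLUSTER_HOSTS:
        raise ValueError(f"a cluster needs at least {MIN_CLUSTER_HOSTS} hosts")
    # Assumption: the threshold is evaluated against the total host count.
    spare = 2 if total_hosts > SECOND_SPARE_ABOVE else 1
    return total_hosts - spare, spare


for size in (7, 15, 17):
    net, spare = split_cluster(size)
    print(f"{size} hosts -> {net} net + {spare} spare")
```

Under these assumptions, a 7-host cluster yields 6 net hosts, a 15-host cluster yields 14, and a 17-host cluster yields 15.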

Cluster Sizes and Net Capacity

Spare server capacity is reserved to maintain availability. Spare nodes cannot be used while all active nodes remain operational, so their capacity is excluded from available capacity calculations. The overview below shows example cluster sizes, including minimum and maximum sizes, and the associated usable net capacity in CPU cores and GB RAM.

| MPC ST CLUSTER SIZE | HYPERVISOR SERVER MODEL | # SPARE SERVERS | # AVAILABLE CPU CORES | # AVAILABLE GB RAM |

|---|---|---|---|---|

| 7 (6+1) minimum | 16C, 512 GB RAM | 1 | 60 | 1380 |

| | 32C, 512 GB RAM | 1 | 90 | 1380 |

| | 32C, 1024 GB RAM | 1 | 90 | 2850 |

| | 64C, 1024 GB RAM | 1 | 180 | 2850 |

| | 64C, 2048 GB RAM | 1 | 180 | 5700 |

| 15 (14+1) | 16C, 512 GB RAM | 1 | 210 | 6440 |

| | 32C, 512 GB RAM | 1 | 420 | 6440 |

| | 32C, 1024 GB RAM | 1 | 420 | 13300 |

| | 64C, 1024 GB RAM | 1 | 840 | 13300 |

| | 64C, 2048 GB RAM | 1 | 840 | 26600 |

| 17 (15+2) | 16C, 512 GB RAM | 2 | 225 | 6900 |

| | 32C, 512 GB RAM | 2 | 450 | 6900 |

| | 32C, 1024 GB RAM | 2 | 450 | 13800 |

| | 64C, 1024 GB RAM | 2 | 900 | 13800 |

| | 64C, 2048 GB RAM | 2 | 900 | 27600 |

Organizational Virtual Data Centers (OVDC)

Customers can define one or more OVDCs in an MPC ST cluster to support flexible and scalable resource organization. An OVDC provides a logical environment with vCPU, RAM, storage resources, and network capabilities on which customers can define VMs.

vCPU performance guarantee

Each OVDC has a minimum guaranteed vCPU performance reservation. Only one performance guarantee can be applied per OVDC. The available options are:

| vCPU Guarantee | Use |

|---|---|

| FULL | For every 1 vCPU, a full core is reserved |

| HIGH | For every 2 vCPU, a full core is reserved |

| OPTIMIZED | For every 8 vCPU, a full core is reserved |
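
As a rough illustration of the ratios in the table above, the following Python sketch estimates how many full cores an OVDC reserves for a given number of vCPUs. The function name `reserved_cores` is hypothetical, and rounding up to whole cores is an assumption, not documented platform behavior.

```python
import math

# vCPUs served by one fully reserved core, per guarantee level (from the table).
VCPUS_PER_CORE = {"FULL": 1, "HIGH": 2, "OPTIMIZED": 8}


def reserved_cores(vcpus: int, guarantee: str) -> int:
    """Estimate the cores reserved for `vcpus` under the given guarantee."""
    ratio = VCPUS_PER_CORE[guarantee.upper()]
    # Assumption: partial cores are rounded up to a whole core.
    return math.ceil(vcpus / ratio)


for level in VCPUS_PER_CORE:
    print(level, reserved_cores(16, level))
```

For 16 vCPUs this gives 16 cores for FULL, 8 for HIGH, and 2 for OPTIMIZED.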

Storage

In MPC ST, storage is included in the host price. Customers can divide and distribute the total storage capacity across multiple OVDCs. Each OVDC can be assigned up to two storage policies. The table below lists the available storage policies.

| Storage Policy | Use |

|---|---|

| ULTRA PERFORMANCE | Logs and other workloads that need the highest IOPS |

| HIGH PERFORMANCE | Databases, RDS/SBC, VDI, and other workloads that benefit from low latency |

| PERFORMANCE | Generic VMs, app / web services, high-performance file services / object storage |

Customers can combine multiple OVDCs in different variants and sizes for different purposes to optimize use of the available compute, storage capacity, and performance of the cluster. Security policies can be configured on virtual networks, and OVDCs can be interconnected.

Features of MPC Storage

- Storage capacity is allocated per policy per OVDC

- Up to two different storage policies can be assigned per OVDC

- MPC storage supports encryption at rest

- The recommended virtual disk size per VM is between 40 GB and 8 TB

Expected Net storage capacity

The available disk space is not equal to the usable storage capacity due to deduplication, compression, and capacity reserved for protection and management. Based on disk size, number of disks, and the number of servers in the cluster, minimum and expected usable capacity can be determined. During pre‑sales, the required capacity is determined based on customer requirements.

Storage consumption per policy is measured as allocated capacity for:

- VM disks

- VM swap files

- Snapshots

- Files in the Library (vApp templates and ISOs)
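
The consumption components listed above can be summed per storage policy, as in the minimal Python sketch below. The class and function names are hypothetical, and the swap-file size is assumed to equal the RAM allocated to the VM; actual metering may differ.

```python
from dataclasses import dataclass


@dataclass
class VmAllocation:
    disk_gb: list[int]    # allocated size of each virtual disk
    ram_gb: int           # assumption: swap file size equals allocated RAM
    snapshot_gb: int = 0  # space currently held by a snapshot, if any


def policy_consumption_gb(vms: list[VmAllocation], library_gb: int = 0) -> int:
    """Sum allocated capacity for one storage policy."""
    total = library_gb  # vApp templates and ISOs in the Library
    for vm in vms:
        total += sum(vm.disk_gb) + vm.ram_gb + vm.snapshot_gb
    return total


vms = [VmAllocation([100, 200], ram_gb=16), VmAllocation([40], ram_gb=8, snapshot_gb=40)]
print(policy_consumption_gb(vms, library_gb=10))
```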

VMware Licensing

MPC ST hosts include VMware Cloud Foundation (VCF) licensing as part of the host price.

Compute Purchase Units

Compute purchase units for MPC ST are based on the available host types.

The purchase units for MPC Single Tenant are described in the table below.

| Purchase Unit | Host Type | Billing Type | Description |

|---|---|---|---|

| AHM-16L05V4#4 | Generic Host | Baseline | 16C, 512GB RAM, 4×3.84TB NVME |

| AHM-16L05V6#4 | Generic Host | Baseline | 16C, 512GB RAM, 6×3.84TB NVME |

| AHM-32L05V8#4 | Generic Host | Baseline | 32C, 512GB RAM, 8×3.84TB NVME |

| AHM-32L05V6#8 | Generic Host | Baseline | 32C, 512GB RAM, 6×7.68TB NVME |

| AHM-32L05V8#8 | Generic Host | Baseline | 32C, 512GB RAM, 8×7.68TB NVME |

| AHM-32L05V6#15 | Generic Host | Baseline | 32C, 512GB RAM, 6×15.36TB NVME |

| AHM-32L10V8#4 | Generic Host | Baseline | 32C, 1024GB RAM, 8×3.84TB NVME |

| AHM-32L10V6#8 | Generic Host | Baseline | 32C, 1024GB RAM, 6×7.68TB NVME |

| AHM-32L10V8#8 | Generic Host | Baseline | 32C, 1024GB RAM, 8×7.68TB NVME |

| AHM-32L10V6#15 | Generic Host | Baseline | 32C, 1024GB RAM, 6×15.36TB NVME |

| AHM-64L10V8#8 | Generic Host | Baseline | 64C, 1024GB RAM, 8×7.68TB NVME |

| AHM-64L10V6#15 | Generic Host | Baseline | 64C, 1024GB RAM, 6×15.36TB NVME |

| AHM-64L20V8#8 | Generic Host | Baseline | 64C, 2048GB RAM, 8×7.68TB NVME |

| AHM-64L20V6#15 | Generic Host | Baseline | 64C, 2048GB RAM, 6×15.36TB NVME |

Note: The cluster hosts are committed for the full contracting period.

Using MPC ST

- Select multiple hosts and clusters that meet resource requirements, provided that each cluster contains only one host type.

- Mix multiple vCPU performance guarantees within a single MPC Single Tenant cluster by creating additional Organizational Virtual Data Centers (OVDCs).

- Use virtual machines of any size within host limits for compute resources; recommended VM sizing guidelines apply, and allocating resources beyond recommended sizes does not necessarily improve performance.

- Follow the maximum recommended VM size of 8 vCPU and 64 GB RAM for MPC ST.

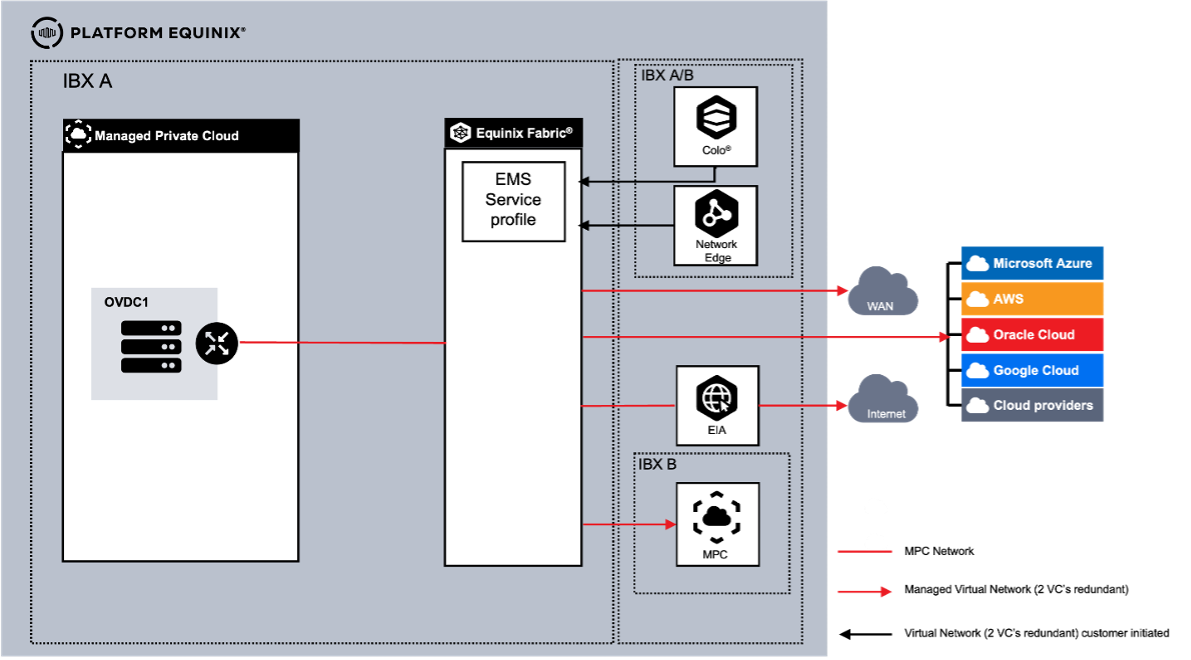

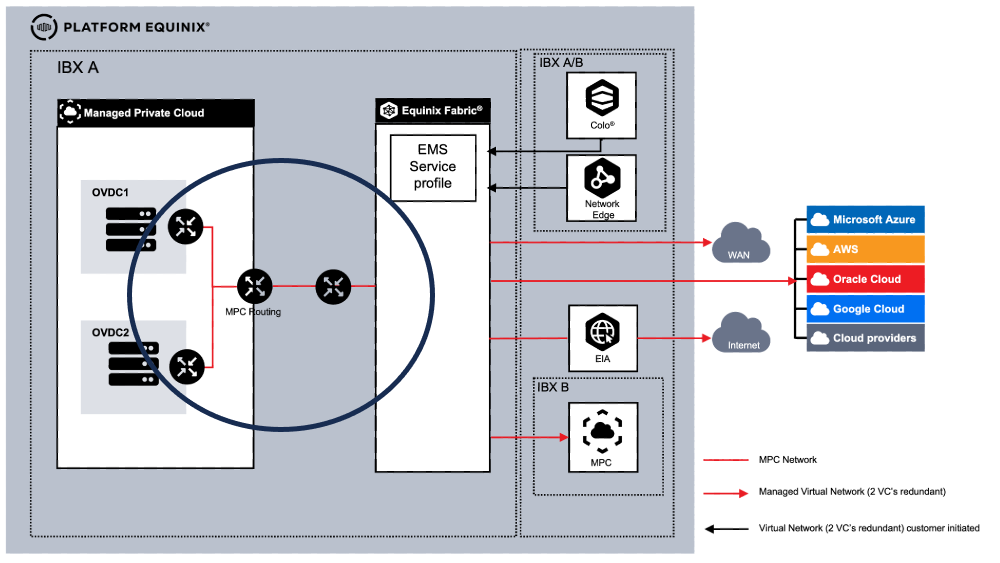

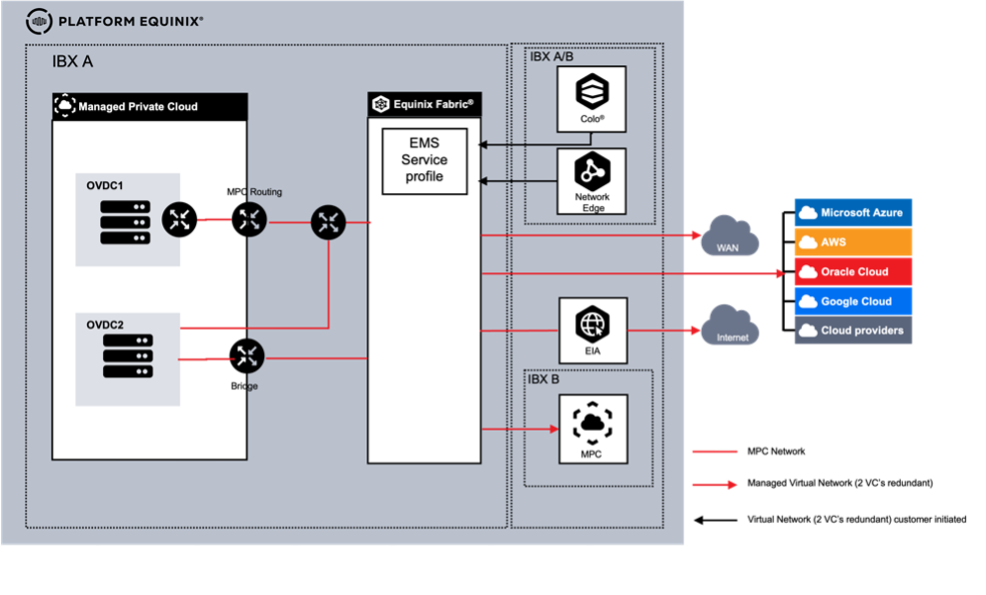

MPC Connectivity

MPC can be connected to the following external environments:

- Equinix Colocation

- Equinix Network Edge

- WAN providers

- Cloud Service Providers (CSP)

- MPC environments in another metro

- Equinix Internet Access (EIA) via Equinix Fabric

Connectivity is supported only through Equinix Fabric using Virtual Circuits. Other connectivity options are not supported.

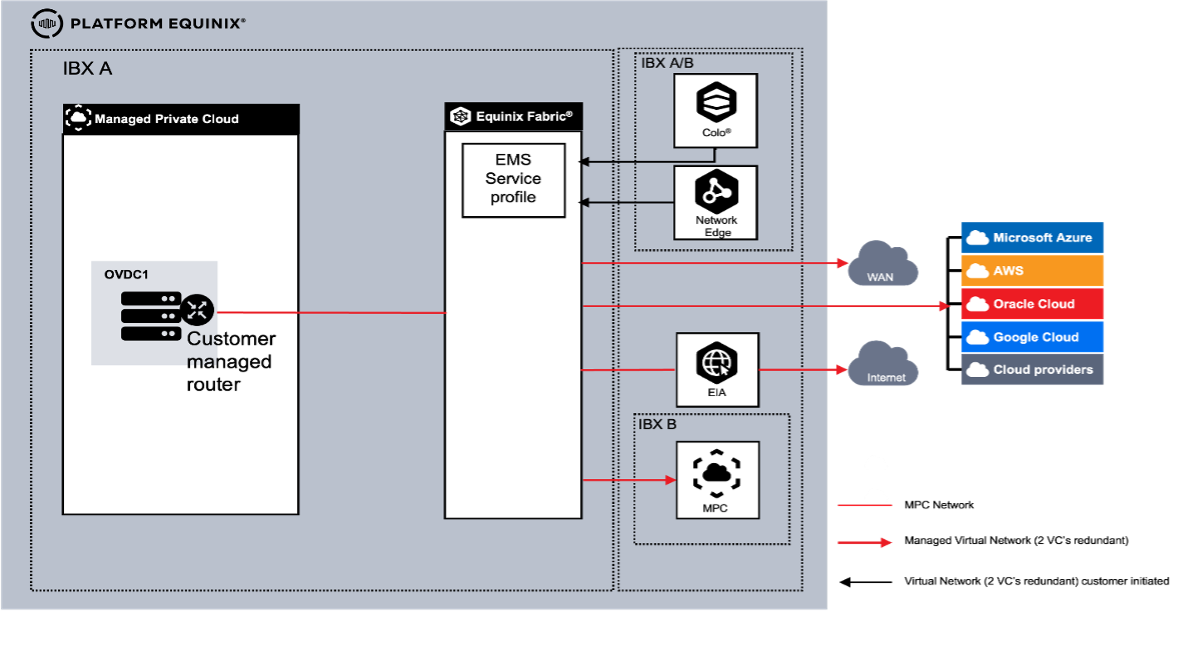

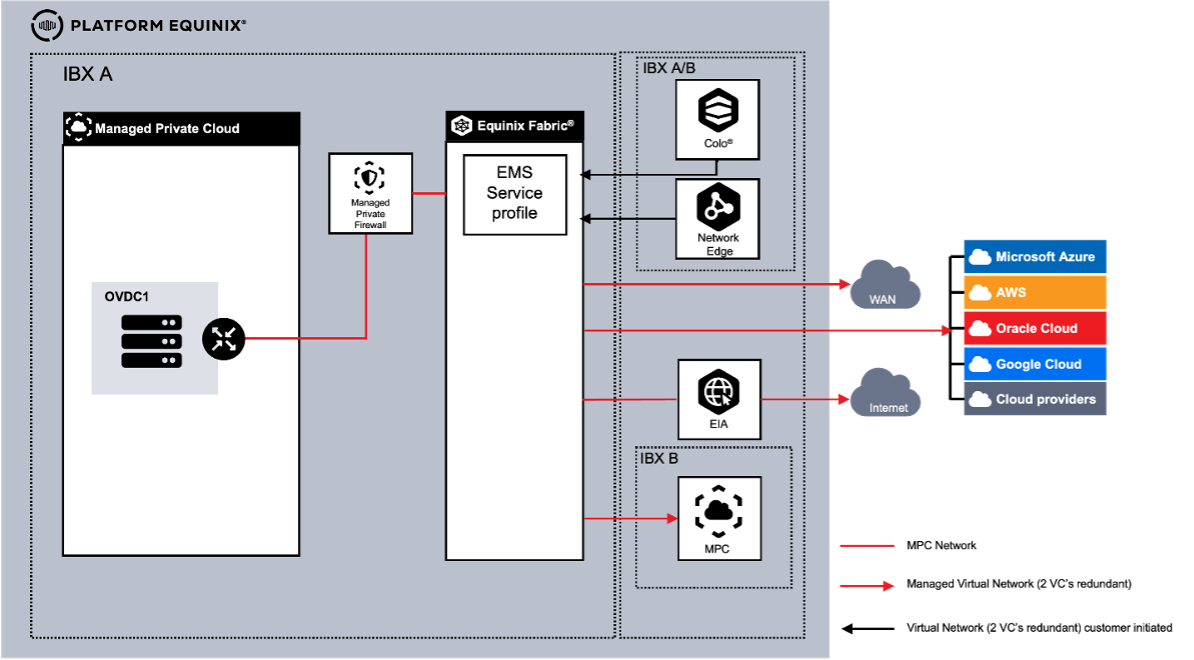

Connectivity Type

Network requirements determine how the customer networks connect to an MPC Organizational Virtual Data Center (OVDC). The following connectivity types are available:

- Routed - Routing in MPC provided by Equinix

- Routed Joined - Routing option using a shared router across multiple OVDCs

- Customer Routed - Routing provided by a customer-managed virtual routing appliance within the OVDC

- Managed Private Firewall (MPF) - Routing provided through the Managed Private Firewall service

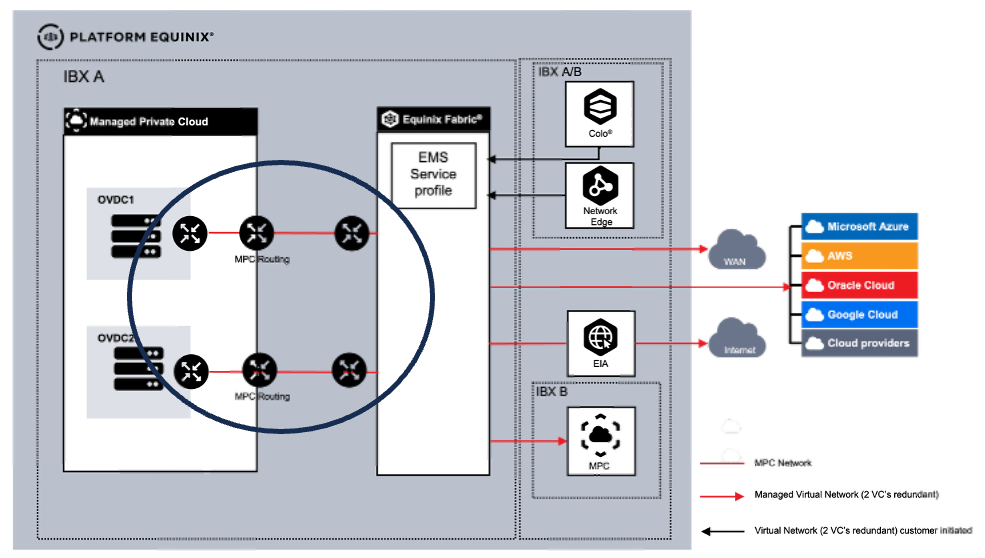

Multiple OVDCs

Each OVDC has a single connectivity type. When multiple OVDCs are used, supported combinations of connectivity types are possible.

Connectivity Routed

This connectivity type provides layer‑3 connectivity to the MPC OVDC. This option includes a built‑in routing engine configurable through the Operational Console. Each MPC internal routed network created is automatically part of the customer routing domain.

Connectivity Customer Routed

This option uses a virtual routing appliance (VM) within MPC as the routing engine. The customer provides, installs, and manages the appliance. All ordered external networks are made available in the Operational Console as external networks provided by Equinix. Additional external networks can be ordered separately. External networks must be connected to the virtual routing appliance.

MPC internal isolated networks must be connected to the routing appliance. The customer is responsible for building layer‑3 (BGP) redundancy using multiple Virtual Connections.

In Customer Routed, VLANs can be trunked for north‑south traffic, from outside MPC to MPC. For east‑west traffic inside MPC, trunking is not supported.

- Routed internal networks are not available with this option. Only isolated internal networks created through self‑service can be used to connect VMs to the virtual routing appliance.

- External networks are required for connecting the virtual routing appliance to external connectivity. Two Virtual Circuits (VCs) are required per connection for redundancy.

- Although the MPC platform provides high availability, deployment of a high‑availability configuration using two virtual routing appliances is recommended.

- Use of VMware tools on routing appliances and VMs is recommended to support graceful management.

Connectivity Managed Private Firewall

When using the Managed Private Firewall (MPF) as an additional service for security, including routing and logging, all external connectivity is terminated at MPF. MPF is connected to the built-in MPC routing. In this combination of MPF and MPC routing, north-south traffic is processed first by MPF and then by MPC. MPC also provides east-west routing.

This option provides a built-in routing setup consisting of two elements.

- MPF routing, configured by Equinix

- MPC routing, configurable via the Operational Console

Each MPC internal routed network created is automatically part of the customer routing domain. Internal isolated networks are not.

Networking with Multiple OVDCs

When using multiple OVDCs in a Metro, customers can use different connectivity type combinations. As the connectivity type is related to the OVDC, each scenario has different capabilities.

When using multiple OVDCs in one Metro, a customer can use a dedicated gateway per OVDC or share one gateway instance across the OVDCs (Routed Joined).

Multiple OVDCs with Connectivity Routed and Customer Routed

When using multiple OVDCs in one Metro, a customer can choose a combination of Routed for one OVDC and Customer Routed for the other. The customer has two implementation options:

- The OVDCs are connected

- The OVDCs are not connected

When the OVDCs are connected, a VLAN exists between the Customer Routed gateway and the Routed gateway. In this case, networks can be created between the two OVDCs. When the OVDCs are not connected, each OVDC operates as a standalone environment.

Virtual Connection Options

| Connection | # | Type | Description |

|---|---|---|---|

| Equinix Colocation | 1 | Self‑Service VC | Customer‑created and managed connection. Via Equinix Fabric Portal using an EMS Service Profile / Service token. |

| Equinix Network Edge | 2 | Self‑Service VC | Customer‑created and managed connection. Via Equinix Fabric Portal using an EMS Service Profile / Service token. |

| WAN Providers | 3 | Managed VC | Equinix‑created and managed connection. Part of the MPC order. |

| Cloud Service Providers (CSP) | 4 | Managed VC | Equinix‑created and managed connection. Part of the MPC order. |

| MPC environment in another Metro | 5 | Managed VC | Equinix‑created and managed connection. Part of the MPC order. |

| Equinix Internet Access (EIA) via Equinix Fabric | 6 | Managed Internet Access | Equinix‑created and managed connection. Part of the MPC order. |

With a self-service VC, customers initiate VCs through the Equinix Fabric portal. Equinix Managed Solutions contacts the customer to configure the connection on the MPC side. Each connection requires a pair of VCs to be ordered.

With a managed VC, the VC is part of an MPC order in which Equinix initiates the VC configuration. During fulfillment, Equinix contacts the customer to collect the parameters required to configure the VCs.

Each connection requires two virtual circuits for redundancy, for both Self-Service and Managed VC.

Internet Access

If internet access is required as part of the MPC environment, Managed Internet Access must be ordered. Managed Internet Access uses Equinix Internet Access (EIA) via Equinix Fabric and is configured with fixed bandwidth, with no bursting options. EIA supports up to 10 Gbps bandwidth to MPC. IP address space must be ordered as a separate item.

For internet access, a customer can alternatively contract a third-party service available on Equinix Fabric. In this case, the customer is responsible for contracting the internet service.

When using a third-party internet provider, two connections must be ordered for redundancy.

MPC Virtual Networking

The MPC platform provides virtual networking functions that can be configured through the MPC Operational Console. The table below lists the available functions and indicates whether they are included in the service or charged separately.

| Function | Charge Type | Routed and Managed Firewall | Customer Routed |

|---|---|---|---|

| Standard Firewall | Included | Y | N |

| Distributed or Advanced Firewall | Charged | Y | Y |

| Routing, IPv4 Static and Dynamic (BGP) | Included | Y | N |

| Routing IPv6 | Included | Y | N |

| NAT | Included | Y | N |

| DHCP | Included | Y | N |

| VPN IPSEC Layer‑3 Site‑to‑Site | Included | Y | N |

| Route Advertisement | Included | Y | N |

Internal Networks

Internal networks for an OVDC can be created through self-service in the MPC Operational Console. The MPC Networking service supports up to 1000 internal networks per OVDC. Internal networks can also span multiple OVDCs within the same Equinix IBX when the OVDCs are configured with a datacenter group.

Two types of internal networks are available:

- Routed: allows VMs to connect to a network that uses the gateway as the router and provides access to WAN, colocation, CSPs, or the internet. Routed networks are available to all VMs and vApps within the OVDC.

- Isolated: not connected to networks with access to WAN, colocation, CSPs, or the internet, unless attached to a self-managed router.

Routed networks are available only for the connectivity type "Routed".

Datacenter Group

When multiple OVDCs are used, a datacenter group creates a unified network across the OVDCs, allowing the same network to be used in multiple OVDCs. A datacenter group is required to use the distributed firewall functionality. If a datacenter group is not available, a service request can be submitted to configure it.

Inter Site Networks

When using MPC in different metros, two MPC sites in different datacenters can be connected by requesting a Managed Virtual Circuit between the sites. The network configuration depends on the selected connectivity type. Connectivity between two datacenters is charged as a Managed Virtual Circuit.

Connectivity between more than two datacenters is supported, but requires a Fabric Cloud Router (FCR), Equinix Fabric IP-WAN, and two virtual connections between the MPC zone and the Fabric Cloud Router.

Ordering and configuring Fabric Cloud Router and IP-WAN is outside the scope of Managed Solutions.

Purchase Units Connectivity - Units per Single OVDC

| Purchase Item | UOM | Calculation Type | Description |

|---|---|---|---|

| Connectivity Type | ONCE | Baseline | Routing instance per OVDC: • Routed • Routed Joined • Customer Routed • Managed Firewall |

| Managed Virtual Circuit | VC | Baseline | Available bandwidth: 10 / 50 / 200 / 500 Mbps or 1, 2, 5, 10 Gbps. Two (2) VCs are needed per connection for redundancy. |

| Managed Internet Access | Each | Baseline | Internet access is available in 10 / 50 / 100 / 200 / 500 Mbps and 1, 2, 5, and 10 Gbps. Managed Virtual Circuits are included in Managed Internet Access. |

| Allocated IP‑space | block | Baseline | Supports IPv4 /24 to /29 and IPv6. Mandatory when Managed Internet Access is procured. |

| Distributed Firewall (DFW) | Per Core | Baseline / Overage | Additional functionality to offer micro‑segmentation |
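
To give a feel for the IPv4 block sizes listed for Allocated IP-space, the Python sketch below uses the standard `ipaddress` module to validate a block against the /24 to /29 range and print the address count per prefix. The helper name `validate_ipv4_block` is hypothetical, the `192.0.2.0` range is a documentation prefix used only as a placeholder, and IPv6 is left out because the table does not state its supported prefix lengths.

```python
import ipaddress


def validate_ipv4_block(cidr: str) -> ipaddress.IPv4Network:
    """Accept only IPv4 blocks between /24 and /29, as listed in the table."""
    net = ipaddress.ip_network(cidr, strict=True)
    if net.version != 4 or not 24 <= net.prefixlen <= 29:
        raise ValueError("IPv4 blocks must be between /24 and /29")
    return net


# Total addresses per block size (network and broadcast addresses included).
for prefix in range(24, 30):
    net = validate_ipv4_block(f"192.0.2.0/{prefix}")
    print(f"/{prefix}: {net.num_addresses} addresses")
```

How many of those addresses remain usable for workloads depends on gateway and reservation conventions that this sketch does not model.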

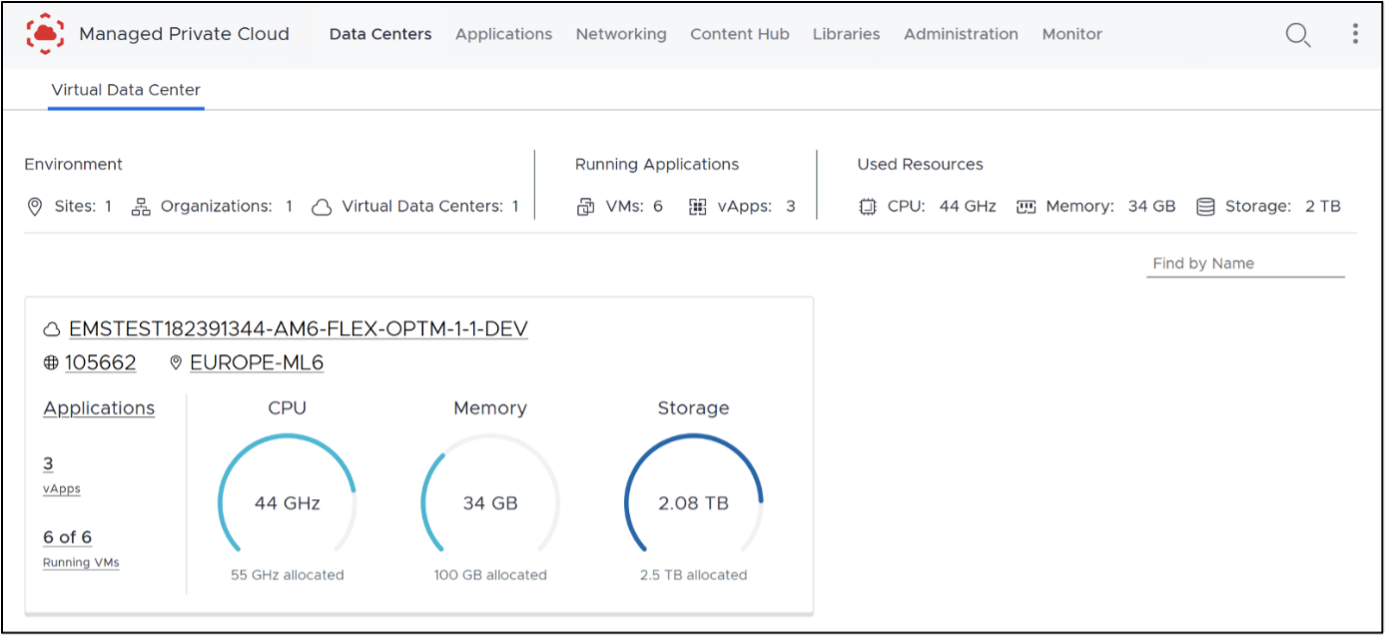

MPC Operational Console

The MPC Operational Console provides automation tooling and an API to manage MPC resources, including the following:

- Manage OVDCs across multiple Equinix data centers

- Create, import, and manage VMs and vApps

- Size VMs (scale up and down)

- Create VM snapshots

- Access VM consoles

- View performance statistics

- Create and populate the Library with ISO and OVA files

- Access the MPC self-service portal and VM consoles directly via web browser without VPN requirements

- Script and automate operations through the API

- Separate or group VMs for availability or performance

- Manage firewall rules and microsegmentation

- Create and manage static routing rules for IPv4

- Create and manage static routing rules for IPv6

- Create and manage NAT, DHCP, and VPN (Layer 2)

- Create and manage IPsec VPN Layer 3 site-to-site tunnels

- Create and manage route advertisements (VRF)

Accessing MPC ST

To access Managed Private Cloud (MPC), go to the Customer Portal. From there, you can access the following:

- Managed Solutions Portal (MSP) – used to raise tickets, submit service requests, and view MPC usage insights.

- Operational Console – used to manage and operate MPC resources.

Regions

The Operational Console provides access to MPC resources within a specific region. MPC is available in four regions: South America, North America, Europe, and Asia.

Each MPC instance is associated with a region. When an instance exists in a region, you can open its corresponding Operational Console by selecting the regional option from the Managed Solutions area of the Customer Portal.

After you sign in to the appropriate regional console, your access to resources is organized by Organization and Organizational Virtual Datacenters (OVDCs).

Organization

An Organization (Org) represents the customer within the Operational Console. The Org can be viewed as a container for all MPC resources in that region, including virtual data centers, users, and content libraries. The Org name is required when signing in to the MPC Operational Console.

Organizational Virtual Datacenter (OVDC)

An Organizational Virtual Datacenter (OVDC) groups the compute, storage, and networking resources you use to run workloads. A single Org can contain multiple OVDCs, which may differ by IBX location (for example, Amsterdam, London, or Ashburn) or by compute performance profile. If you require additional capacity or an additional OVDC, contact your assigned Service Delivery Manager or Account Manager to request it.

Tenant Roles

The following Tenant Roles apply only to the Managed Private Cloud Single Tenant and Managed Private Cloud FLEX variants.

Tenant roles define the permissions and actions available to users within an MPC tenant. Roles are assigned to users after they are added through the Customer Portal. Roles determine what actions users can perform in the Operational Console and which API capabilities are available to them.

During the initial deployment of a new MPC environment, Equinix creates the first customer administrator account as part of the onboarding process. This account is created using the customer-provided name and email address, and is automatically assigned the Tenant Admin role, which provides full access to the MPC Operational Console, including tenant-level administration and configuration capabilities.

The following roles are supported:

Tenant Admin - The Tenant Admin role provides full administrative access to an MPC tenant. Users assigned this role can manage tenant configuration, user access, and automation capabilities across the environment. Tenant Admin permissions include:

- Access the VM console

- Create and delete snapshots

- Create and delete personal API tokens

- Create, modify, and delete organization-wide service accounts

- Create, modify, and delete virtual machines within the tenant scope

- Create, modify, and delete vApps within the tenant scope

- Create, modify, and delete networks within the tenant scope

- Create, modify, and delete content libraries and library content within the tenant scope

- Configure edge gateways within the tenant scope

- Configure affinity rules

- View tasks and event logs within the tenant scope

Tenant User - The Tenant User role allows users to create and manage workloads and selected resources within an MPC tenant, without access to tenant-wide administrative settings. Tenant User permissions include:

- Access the VM console

- Create and delete snapshots

- Create and delete personal API tokens

- Start and stop virtual machines within the tenant scope

- Start and stop vApps within the tenant scope

- Update VM tools within the tenant scope

- View tasks and event logs within the tenant scope

Tenant Viewer - The Tenant Viewer role provides read-only access to tenant configuration and resource status. Users assigned this role can view the same resources and information available to the Tenant User role, with the following restrictions:

- No create, modify, or delete permissions for virtual machines or vApps

- No console access

Managing MPC ST

User Management

Users of the Operational Console are managed through the Customer Portal. See User and Password management. Once users are added, a Service Request must be submitted to assign the MPC tenant role to the user.

To use a different identity source, identity federation can be configured in the Customer Portal to integrate with an external identity provider. This enables users to sign in using their company credentials.

API Access

Access to the Managed Private Cloud (MPC) Operational Console API is intended for programmatic access and automation. Common automation tools include Terraform, Ansible, Python, and XML- or JSON-based API calls. Authentication to the API requires generating access tokens in the Operational Console. Two access methods are supported, each designed for a different use case.

| Method | Details |

|---|---|

| API tokens | • API access to the Operational Console on behalf of the Customer Portal or federated user accounts for individual programmatic access. • Can be configured by all Customer Portal or federated user accounts. |

| Service accounts | • API access to the Operational Console using standalone accounts intended for organization-wide automation and third-party tools or applications. • Can be configured only by users with the Tenant Admin role. • Can be assigned one of the supported roles. • Supports API access only. |

Terraform Automation

Terraform is an infrastructure-as-code tool that allows cloud and on-premises resources to be defined using human-readable configuration files. These files can be versioned, reused, and shared, enabling a consistent workflow for provisioning and managing infrastructure throughout its lifecycle.

Terraform can be used to manage low-level resources such as compute, storage, and networking. Most Operational Console functionality is available through the API or Terraform. For more information, see the Terraform provider documentation.

API tokens

API tokens can be created by the Customer Portal or federated user account in the Operational Console and are used for personal programmatic access. Here's how to create an API token:

- Sign in to the Operational Console. Open User preferences from the top-right menu.

- Go to the API Tokens section and select New.

- Enter a name for the token and select Create.

- Copy and store the generated token in a secure location. The token is displayed only once and cannot be viewed again after closing this screen. If the token is lost, a new one must be generated.

- After creation, the token appears in the API Tokens list and can be revoked when no longer needed.
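
Once a token exists, automation typically exchanges it for a short-lived access token. The Python sketch below only builds such a request and does not send it. It assumes the Operational Console follows the VMware Cloud Director convention of a `/oauth/tenant/<org>/token` endpoint with a `refresh_token` grant; that endpoint, the host `console.example.com`, and the function name `build_token_request` are assumptions for illustration and should be checked against the API Explorer.

```python
import urllib.parse
import urllib.request


def build_token_request(console_host: str, org: str, api_token: str) -> urllib.request.Request:
    """Build (but do not send) a token-exchange request for an API token."""
    # Assumption: Cloud Director-style OAuth endpoint scoped to the Org name.
    url = f"https://{console_host}/oauth/tenant/{urllib.parse.quote(org)}/token"
    body = urllib.parse.urlencode(
        {"grant_type": "refresh_token", "refresh_token": api_token}
    ).encode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Accept": "application/json",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )


# Placeholder values; never hard-code a real token in source files.
req = build_token_request("console.example.com", "my-org", "REPLACE_WITH_API_TOKEN")
print(req.get_method(), req.full_url)
```

Under the same assumption, passing the request to `urllib.request.urlopen` would return a JSON document whose access token is then sent as a bearer token on subsequent API calls.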

Service Accounts

Service accounts can be created, viewed, and deleted only by users with the Tenant Admin role due to the sensitive nature of these accounts. A service account is the owner of any objects it creates in the Operational Console. Here's how to create a service account:

- Sign in to the Operational Console. Open Administration, then select Service Accounts and choose New.

- Enter a name for the service account, assign a role, and use the magic wand to generate a unique ID. Select Next to continue.

- (Optional) Assign one or more quota limits to the service account.

- Select Finish to create the service account.

- After creation, the API key is displayed in the Client ID field when viewing the service account properties. Most service account settings can be modified later by selecting Edit.

API Explorer

The API Explorer provides a graphical interface for viewing, testing, and executing API calls and is accessible through the Operational Console.

- In the Operational Console, open the top-right menu and select Help > API Explorer.

- The API Explorer exposes the Swagger‑based JSON API and allows API calls to be executed on behalf of the currently signed-in user account.

Operational Console

When a user is logged into the Customer Portal, the Operational Console can be accessed by navigating to the Managed Solutions Portal, selecting Managed Private Cloud, and then selecting the desired region.

If the Operational Console is accessed directly through its URL and Sign In is selected, the user is redirected to the Customer Portal to authenticate with Customer Portal credentials. The Customer Portal also supports configuration of SAML-based federation with an organization’s identity provider, enabling users to access the Operational Console using their company credentials.

From the Operational Console, actions can be performed to create virtual machines, networks, and security rules.

vApp

A vApp is a group of virtual machines within a virtual data center, for example, representing an application landscape. Virtual machines can be managed as a group, such as taking a snapshot or restarting them, and individual virtual machines can also be managed separately within a vApp. Virtual machines and vApps can be assigned a lease period, after which they are automatically removed. The default lease is set to never expire.

A vApp can be created with or without virtual machines. Creating a vApp without virtual machines can be useful when setting up the vApp network before creating the virtual machines.

Create a new vApp

- Go to the main page under Applications > vApps, select Build New vApp, provide a name and description, and select Build. Wait for the process to complete.

- Options in the vApp window:

  - Select Power to turn the vApp on, shut it down, restart it, or pause it. These actions are available only if the vApp contains a virtual machine.

  - Select More to add or remove a virtual machine from the vApp.

  - Select Details to view or modify additional information.

Virtual Machines

Virtual machines can be created either as part of a vApp or as standalone virtual machines. Creating virtual machines within a vApp is recommended. A vApp can contain up to 100 virtual machines, and virtual machines can be moved between vApps. Networks can also be created between virtual machines that reside in different vApps.

Creating virtual machines as part of a vApp provides several benefits, such as grouping virtual machines by task, function, or retention requirements; configuring startup and shutdown order; improving visibility of network configuration through the vApp network diagram; and enabling delegated management through role-based access.

Allocated disk capacity and allocated RAM (in GB, for the VM swap file) are counted as used storage under the assigned storage policy. When a virtual machine is started, compute resources are deducted from the compute quota of the organization virtual data center (OVDC).

The most common operating system installation method for a new virtual machine includes selecting an ISO file from the private or shared Equinix catalog, allowing the virtual machine to boot from the ISO file when powered on and begin the installation process. Another option is to create a new virtual machine and vApp from an OVF or OVA file on the administrator’s workstation during the creation workflow.

Create a virtual machine

- Go to Applications > Virtual Machines and select Create VM, or select More on a vApp and choose Add VM.

- Follow the steps in the creation dialog:

  - Enter a name and computer name.

  - Select New for the type.

  - Choose whether the virtual machine should start automatically after creation.

  - Select the OS family, guest OS, and boot image.

  - Configure boot options, including EFI Secure Boot and boot setup.

  - Configure Trusted Platform Module (TPM) settings, if required.

  - Configure compute resources, including CPU, cores, and memory.

  - Configure storage, including storage policy and disk size.

  - Configure networking settings.

- Select OK and wait for the virtual machine to be created.

- In the Virtual Machines screen:

  - Select Power to turn the virtual machine on or off, restart it, or pause it.

  - Select More to mount installation media, manage snapshots, open the console, or delete the virtual machine.

  - Select View to display or modify configuration details and additional settings.

Link installation media from catalog to a VM

If a catalog is already available and installation media has been uploaded, it can be attached to the virtual machine.

- Locate the virtual machine and select More.

- Select Insert Media and choose the installation file. The file is connected as a virtual CD-ROM.

- After the job completes, it is recommended to detach the installation file by selecting Eject Media.

VM Console

A virtual machine can be managed through the Operational Console. Two console types are available:

- Web Console, which works in a web browser.

- VM Remote Console, which requires installing a plug-in.

Installation media (ISO) cannot be used directly from a local device. Media must be uploaded to a catalog before it can be mounted to a virtual machine.

The VM Remote Console plug-in for Windows devices is available in the shared catalog. For macOS and Linux (including Windows via Workstation), VMRC functionality is included in VMware Fusion Pro and VMware Workstation Pro. These applications require separate licensing and are not included with MPC or provided by Equinix.

- Locate the virtual machine from the vApp or the Virtual Machines overview.

- Select More and choose the desired console type: the Web Console (no plug-in required) or the VM Remote Console (plug-in required).

Snapshots

A snapshot captures the state of a complete virtual machine or vApp before an action is performed. The Operational Console allows only one snapshot at a time, with or without the active memory state. Taking a snapshot temporarily doubles the storage usage of the virtual machine or vApp. Snapshots can affect virtual machine performance. It is recommended to retain snapshots for a maximum of 2-3 days; snapshots older than one week are removed automatically. For longer-term preservation of a virtual machine state, create a clone or perform a backup of the virtual machine or vApp.

- Locate the virtual machine or vApp, select Actions > Snapshot, and choose Create Snapshot, then confirm.

- To restore the virtual machine to the snapshot state, select Revert to Snapshot and confirm.

- To delete the snapshot, select Remove Snapshot and confirm.

When a new snapshot is created from a VM with an existing snapshot, the old snapshot is removed. To have access to all snapshot options, VMware Tools must be installed on the VM.

Catalogs

The catalog in the Operational Console stores vApps, virtual machines, and media files (such as ISO images). Equinix provides a shared catalog with a set of ISO files. The shared catalog is associated with the OVDC, specifically the environment's metro.

A private catalog can also be created to store installation media or templates. Files can be uploaded directly, or templates can be created from existing virtual machines or vApps. Files stored in the private catalog count toward the OVDC storage quota. All uploaded software must comply with vendor licensing requirements and Equinix policies. The catalog may be used only for ISO and OVF files.

Create a catalog

To store installation media or templates, a catalog must first be created.

- On the home screen, select the menu icon (three horizontal lines).

- In the Content Hub screen, select Catalogs, then choose New.

- Enter a name and description for the catalog. Catalogs can be stored on any available storage profile. By default, the fastest profile is selected. To choose a specific storage profile, enable Pre-provision on a specific storage policy, select the ORGOVDC, and choose the required storage policy.

Add installation media

When the catalog is available, installation media can be uploaded to it.

- Go to Content Libraries > Media & Other and select Add.

- In the new screen, select the catalog, then click the upload icon (arrow pointing up) to open the file browser. Select the installation file and confirm with OK.

- Edit the name if required and select OK to start the upload. In the Media & Other overview, a rotating indicator appears while the upload is in progress. A green check mark appears when the upload is complete. The file is then ready for use.

- Selecting the three dots next to the file name provides options to delete the file or download it to the workstation.

Create a vApp Template

A vApp template can be created by uploading a file from a local workstation or by using an existing vApp. A template for a single virtual machine based on a vApp can only be created if the vApp contains exactly one virtual machine.

- Locate the vApp to be used as the source, select More, and choose Add to Catalog. Select the destination catalog and enter a name. An exact copy can be created, or virtual machine settings can be adjusted if VMware Tools are installed before template creation.

- To access the vApp template, go to Content Hub > Catalogs and open the relevant catalog. Once available, the template can be used to deploy new virtual machines.

Shared Catalogs

Equinix provides a shared catalog (OS-CATALOG-1) per MPC metro that contains a set of operating system variants. Select the catalog associated with the metro where the virtual machine will be deployed. The shared catalog is associated with the MPC variant (FLEX or ST) in that metro. If both MPC FLEX and MPC ST are used in the same metro, two catalogs for that metro will be visible.

Connectivity and Networks

The Operational Console provides several options for connecting and accessing services within the organization or externally. Depending on the selected connectivity model, an OVDC is created with either Bridging (MPC Connectivity Customer Routed in the order) or Routing (MPC Connectivity Routed in the order).

The available network functions in the Operational Console vary depending on whether Customer Routed or Routed connectivity is selected.

In the Operational Console, two types of networks can be created through Self-Service:

- Isolated network (internally connected): An isolated network exists only for virtual machines within the OVDC.

- Routed network (externally connected): A routed network provides access to external networks outside the OVDC through the edge gateway.

| MPC Connectivity Type | Isolated | Routed |
|---|---|---|
| Bridging | Yes | No |
| Routing | Yes | Yes |

MPC Gateway Firewall Architecture

MPC uses a layered gateway firewall architecture that combines perimeter and workload-level controls to secure both North–South and East–West traffic. The MPC Firewall design includes two main types or layers of firewalls:

- Gateway Firewalls (North–South): Protect the MPC perimeter and handle traffic entering or leaving the environment.

- Distributed Firewall (East–West): Protect workloads at the vNIC level and provide micro‑segmentation within the OVDC.

Create an isolated OVDC Network

An organization virtual data center (OVDC) network enables virtual machines (VMs) to communicate with each other and, optionally, with external networks. A single OVDC can contain multiple networks.

- In the Operational Console Virtual Datacenters dashboard, select the OVDC where the network will be created.

- In the left navigation panel, select Networks.

- Click New.

- Under Scope, select the current OVDC for the isolated network.

- In the Network Type page of the New Organization VDC Network dialog box, select Isolated, then click Next.

- In the General page, enter a Name and Description for the network.

- In the Gateway CIDR field, select the IP space for the network from the dropdown list. This list is pre-populated by Equinix based on customer input during deployment.

- Dual Stack can be enabled when IPv6 is required.

- Guest VLAN Allowed can be enabled to permit multiple VLANs within connected VMs.

- Click Next.

- (Optional) In Static IP Pools, enter a range of IP addresses for VMs on this network and click Add. This allows the Operational Console to automatically assign IP addresses during VM creation. If not configured, IP assignment must be done manually during VM creation. Example: If the gateway address is 192.168.1.1/24, a static IP pool of 192.168.1.10–192.168.1.100 provides 91 usable IP addresses. Additional ranges can be added later if needed.

- When finished, click Next.

- (Optional) In the DNS page, enter DNS information, then click Next. If omitted, DNS settings must be entered manually when creating VMs.

- On the Ready to Complete page, review all settings and click Finish.
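The static IP pool arithmetic from the example in the steps above can be checked with Python's standard `ipaddress` module. This is a minimal sketch; the helper names `pool_size` and `pool_fits_gateway` are hypothetical and are not part of the Operational Console.

```python
import ipaddress


def pool_size(first: str, last: str) -> int:
    """Count the addresses in an inclusive range such as 192.168.1.10-192.168.1.100."""
    start = ipaddress.ip_address(first)
    end = ipaddress.ip_address(last)
    if end < start:
        raise ValueError("range end precedes range start")
    return int(end) - int(start) + 1


def pool_fits_gateway(gateway_cidr: str, first: str, last: str) -> bool:
    """Check that the pool lies inside the gateway's network and skips the gateway address."""
    iface = ipaddress.ip_interface(gateway_cidr)
    start = ipaddress.ip_address(first)
    end = ipaddress.ip_address(last)
    inside_network = start in iface.network and end in iface.network
    excludes_gateway = not (start <= iface.ip <= end)
    return inside_network and excludes_gateway


if __name__ == "__main__":
    print(pool_size("192.168.1.10", "192.168.1.100"))  # 91, matching the example in the text
    print(pool_fits_gateway("192.168.1.1/24", "192.168.1.10", "192.168.1.100"))
```

The count is inclusive of both ends of the range, which is why 10 through 100 yields 91 addresses rather than 90.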

Create a routed OVDC Network

A routed OVDC network enables virtual machines (VMs) to communicate with each other and to external networks using Layer 3 (L3) routing. A single OVDC can contain multiple routed networks. The creation process differs depending on whether Distributed Firewall functionality is used.

- In the Operational Console Virtual Datacenters dashboard, select the OVDC where the network will be created.

- In the left navigation panel, select Networks, then click New.

- Under Scope, select Current Organization Virtual Data Center if Distributed Firewall is not being used.

- In the Network Type page of the New OVDC Network dialog box, select Routed, then click Next.

- Under Edge Connection, select the Edge Gateway that the routed segment will connect to.

- In the General page, enter a Name and Description for the network.

- In the Gateway CIDR field, select the IP space for the network from the dropdown list. This list is pre-populated by Equinix based on the customer inputs provided during deployment.

- Enable Dual Stack if IPv6 is required.

- Enable Guest VLAN Allowed to permit multiple VLANs within connected VMs.

- Click Next.

- (Optional) In the DNS page, enter DNS settings, then click Next. If not configured here, DNS information must be added manually during VM creation.

- On the Ready to Complete page, review the selections then click Finish. After the network has been created and connected to the OVDC, the Edge Gateway can be configured to control which traffic is allowed into and out of the OVDC.

When using a Distributed routed network:

- Traffic between Distributed networks is firewalled using the Distributed Firewall because it does not traverse the Edge Gateway.

- Traffic entering or leaving the environment passes through the Edge Gateway, where gateway firewalling applies.

- The Distributed Firewall requires the MPC Service Option for Distributed Firewall, which must be ordered as part of the MPC contract.

If Distributed Routing is enabled, Gateway Firewalls are not available for this network and the Distributed Firewall (DFW) must be used. North–South firewalling remains available. If Distributed Routing is disabled, only Gateway Firewalls are available for this routed network.

Guest VLAN Allowed

Enabling this option allows VLAN tags (802.1Q tags) to be configured on the network interfaces of virtual machines. This allows multiple VLANs to be operated on the same routed or isolated network segment.

Create Gateway Firewall Rules

The Operational Console provides a fully featured Layer 4 firewall to control traffic moving across security boundaries—both North-South (traffic between the OVDC and external networks) and East-West (traffic within and between OVDC networks). When specifying networks or IP addresses for firewall rules, the following formats are supported:

- A single IP address

- IP ranges (e.g., 192.168.1.10–192.168.1.50)

- CIDR notation (e.g., 192.168.2.0/24)

- Keywords: internal, external, or any
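As an illustration of the four address formats listed above, the following sketch classifies a value before it is entered in a rule. It assumes a hypothetical `classify` helper built on the standard `ipaddress` module; the console performs its own validation, which may differ.

```python
import ipaddress

# Keywords named in the text.
KEYWORDS = {"internal", "external", "any"}


def classify(value: str) -> str:
    """Return 'keyword', 'range', 'cidr', or 'single'; raise ValueError if the value is invalid."""
    text = value.strip()
    if text.lower() in KEYWORDS:
        return "keyword"
    # Accept both an en dash and a plain hyphen as the range separator.
    for separator in ("\u2013", "-"):
        if separator in text:
            first, last = (part.strip() for part in text.split(separator, 1))
            start = ipaddress.ip_address(first)
            end = ipaddress.ip_address(last)
            if start.version != end.version or end < start:
                raise ValueError(f"invalid range: {value}")
            return "range"
    if "/" in text:
        ipaddress.ip_network(text, strict=False)  # raises ValueError on bad input
        return "cidr"
    ipaddress.ip_address(text)  # raises ValueError on bad input
    return "single"


if __name__ == "__main__":
    for sample in ("10.0.0.5", "192.168.1.10-192.168.1.50", "192.168.2.0/24", "any"):
        print(sample, "->", classify(sample))
```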

- In the Operational Console Virtual Datacenters dashboard, select the OVDC containing the Edge Gateway where the firewall rules will be created.

- In the left navigation panel, click Edges. The available Edge Gateways will appear on the right.

- Select the Edge Gateway to configure, then open the Firewall tab.

- In the Firewall tab, firewall rules can be created and managed.

- Click New to add a new rule. For the new rule, specify the following fields:

- Name

- Applications

- Context

- Source (IP address, IP sets, Static Groups)

- Destination (IP address, IP sets, Static Groups)

- Action (Allow, Drop, Reject)

- Protocol (IPv4, IPv6, or both)

- Logging

- Comments

- In the Source and Destination fields, define the addresses for the rule. To specify an IP or range, click IP and enter the Value, then click Keep. To reuse groups of IPs, go to Grouping Objects and click + to create an IP Set. This IP Set can then be selected for multiple rules.

- In the Application field, click +, then in the Add Service dialog box specify the Protocol, Source Port, and Destination Port, or define a custom protocol/port combination. When done, click Keep. Custom applications can also be created in advance and applied to firewall rules.

- Choose whether the rule’s Action allows the traffic (Allow) or denies it (Drop or Reject). If a syslog server is configured, select Enable logging.

- Click Save changes to finalize the rule.

An example of a firewall rule is one that allows HTTPS traffic from the internet. This example uses allocated public IP addresses. The source is any (any IP address), and the source port is also any. The destination is a private IP address and the destination port is 443 for HTTPS. A DNAT configuration is also required for this rule to work.

Create NAT rules

Network Address Translation (NAT) allows the source or destination IP address to be changed so that traffic can pass through a router or gateway. The most common types of NAT on the Edge Gateway are:

- Destination NAT (DNAT) - changes the destination IP address of the packet.

- Source NAT (SNAT) - changes the source IP address of the packet.

Other options are:

- NO DNAT

- NO SNAT

- REFLEXIVE

For a virtual machine (VM) to access an external network resource from its OVDC, NAT may be required in certain use cases. In these cases, use one of the following options:

- Public internet IP addresses provided by Equinix.

- Private networks via MPC Connect.

- This functionality is primarily applicable when the Edge Gateway is configured with public IP addresses and VMs are using private IP addresses.

- NAT rules function only when the firewall is enabled. The firewall should remain enabled at all times.

DNAT changes the destination IP address of a packet and performs the reverse operation for reply traffic. DNAT can be used to expose a service running on a private network through a public IP address.

- In the Operational Console Virtual Datacenters dashboard, select the OVDC that contains the Edge Gateway where the DNAT rule is created.

- In the left navigation panel, click Edges. Select the NAT tab and add a new NAT rule.

- In the NAT section, click + DNAT Rule.

- In NAT Action, select the type of NAT rule.

- Enter an External IP (for example, 10.30.40.95).

- Enter an External Port (for example, 443).

- Enter an Internal IP (for example, 192.168.1.10).

- Advanced Settings

- State - enables or disables the rule

- Logging - logs the rule

- Priority - lower values mean a higher priority while 0 is the default value

- Firewall Match

- Match Internal Address

- Match External Address

- Bypass

- Applied To - leave blank

- If a syslog server is configured, select Enable logging.

- When finished, click Keep, then Save changes.

Create SNAT rules

SNAT changes the source IP address of a packet and performs the reverse operation for reply traffic. When accessing external networks, such as the internet, an SNAT rule is required to translate internal IP addresses to an address available on the external network.

- In the Operational Console Virtual Datacenters dashboard, select the OVDC that contains the Edge Gateway where the SNAT rule is created.

- In the left navigation panel, click Edges. Select the NAT tab and add a new NAT rule.

- In the NAT section, click + SNAT Rule.

- In NAT Action, select SNAT.

- External IP - the external network (usually named VCD_CUSTOMER_WAN).

- Internal IP - the network or IP address translated for internet access.

- Advanced Settings

- State - enables or disables the rule

- Logging - logs the rule

- Priority - lower values mean a higher priority while 0 is the default value

- Firewall Match

- Match Internal Address

- Match External Address

- Bypass

- Applied To - leave blank

- When finished, click Keep, then Save changes.

Create NO SNAT rules

NO SNAT rules negate existing SNAT rules. A NO SNAT rule can be created to bypass SNAT for traffic destined for a specific IP address. To ensure correct processing order, the NO SNAT rule must be assigned a higher priority (lower numerical value) than the SNAT rule. The default priority is 0, which is the highest priority.

- In the Operational Console Virtual Datacenters dashboard, select the OVDC that contains the Edge Gateway where the NO SNAT rule is created.

- In the left navigation panel, click Edges. Select the NAT tab and add a new NAT rule.

- In the NAT section, click + No SNAT Rule.
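The priority relationship between a NO SNAT rule and an SNAT rule can be sketched as follows. The `NatRule` class and rule names are hypothetical, and the sketch only models the ordering stated above (a lower value means a higher priority, with 0 as the default); how the Edge Gateway orders rules with equal priority is not covered here.

```python
from dataclasses import dataclass


@dataclass
class NatRule:
    """Hypothetical, simplified model of a NAT rule."""
    name: str
    action: str        # for example "SNAT" or "NO_SNAT"
    priority: int = 0  # 0 is the default and the highest priority


def evaluation_order(rules: list[NatRule]) -> list[NatRule]:
    """Sort rules so that lower priority values come first."""
    return sorted(rules, key=lambda rule: rule.priority)


def no_snat_takes_precedence(no_snat: NatRule, snat: NatRule) -> bool:
    """True when the NO SNAT rule has a strictly lower priority value than the SNAT rule."""
    return no_snat.priority < snat.priority


if __name__ == "__main__":
    snat = NatRule("snat-all", "SNAT", priority=10)
    no_snat = NatRule("no-snat-vpn", "NO_SNAT", priority=5)
    print([rule.name for rule in evaluation_order([snat, no_snat])])
    print(no_snat_takes_precedence(no_snat, snat))
```

One consequence worth noting: if the SNAT rule is left at the default priority of 0, a NO SNAT rule cannot be given a lower value (assuming negative values are not accepted), so the SNAT rule's priority value has to be raised first.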

Configure IPsec VPN

The Operational Console supports site-to-site VPN connections to the following endpoints:

- An Edge Gateway within the same organization

- An Edge Gateway in another organization (Equinix or another vCloud service provider)

- A remote network that provides an IPsec VPN endpoint, such as a cloud service provider or a colocation environment

Depending on the connection type, IP addressing must be configured for both endpoints, along with a shared secret. The OVDC networks that are permitted to communicate over the VPN connection must also be specified.

Before configuring IPsec VPN settings, take note of the IP address of the Edge Gateway to be used as the tunnel endpoint.

- In the Operational Console Virtual Datacenters dashboard, select the OVDC that contains the Edge Gateway to be configured.

- In the left navigation panel, click Edges.

- On the Edges page, select the Edge Gateway.

- In the Services tab, locate the IPsec VPN option and click New to create a new IPsec VPN configuration.

Configure the edge gateway IPsec VPN settings

- Configure a name, enable Logging to view logs, and leave the remaining options set to their default values.

- Peer Authentication Mode

- Pre-Shared Key: The shared secret used to authenticate and encrypt the connection. It must be an alphanumeric string between 32 and 128 characters and include at least one uppercase letter, one lowercase letter, and one number. This value must be the same on both sites.

- Certificate: To use a certificate, upload the certificate and the CA certificate.

- Endpoint Configuration

Local Endpoint

- IP Address: The external IP address of the edge gateway.

- Networks: Enter the organization networks that can access the VPN. Separate multiple local subnets with commas.

Remote Endpoint

- IP Address: The external IP address of the remote site, on‑premises firewall, or edge gateway where the VPN is configured.

- Networks: The subnet on the on‑premises network that should be accessible from the OVDC. For example, if on‑premises networks use the 172.20.0.0/16 range, enter 172.20.0.0/16, or a smaller subnet such as 172.20.0.0/25.

- Remote ID:

- This value uniquely identifies the peer site and depends on the tunnel authentication mode. If not specified, the remote ID defaults to the remote IP address.

- Pre-shared Key: The remote ID depends on whether NAT is configured. If NAT is configured at the remote site, enter the private IP address of the remote site. Otherwise, use the public IP address of the remote device terminating the VPN tunnel.

- Certificate: The remote ID must match the SAN (Subject Alternative Name) of the remote endpoint certificate, if present. If the remote certificate does not include a SAN, the remote ID must match the distinguished name of the certificate used to secure the remote endpoint.

- When configuration is complete, click Keep to create the edge end of the VPN tunnel, then click Save changes.
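The pre-shared key requirements listed under Peer Authentication Mode can be checked before the key is entered on both sites. The sketch below is an assumption-laden illustration: the function names are hypothetical, "alphanumeric" is read as ASCII letters and digits, and the 32 and 128 character bounds are treated as inclusive.

```python
import re
import secrets
import string


def is_valid_psk(psk: str) -> bool:
    """Check length, character set, and the required character classes."""
    if not 32 <= len(psk) <= 128:
        return False
    if not re.fullmatch(r"[A-Za-z0-9]+", psk):
        return False
    return (
        any(ch.isupper() for ch in psk)
        and any(ch.islower() for ch in psk)
        and any(ch.isdigit() for ch in psk)
    )


def generate_psk(length: int = 64) -> str:
    """Generate a random key that satisfies is_valid_psk."""
    if not 32 <= length <= 128:
        raise ValueError("length must be between 32 and 128")
    alphabet = string.ascii_letters + string.digits
    while True:
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        if is_valid_psk(candidate):
            return candidate


if __name__ == "__main__":
    key = generate_psk()
    print(len(key), is_valid_psk(key))
```

The `secrets` module is used instead of `random` because the key is a credential; the same generated value must then be configured on both ends of the tunnel.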

Create the second VPN gateway

If this endpoint is in a different OVDC, repeat the steps to create the tunnel. After the tunnel is created, update firewall settings and validate the connection. If connecting to an external data center, configure the tunnel on those premises.

IKE Phase 1 and Phase 2

IKE (Internet Key Exchange) is a standard mechanism for establishing secure, authenticated communications. The following lists the supported configuration parameters for Phase 1 and Phase 2.

Phase 1 parameters

Phase 1 establishes peer authentication, negotiates cryptographic parameters, and generates session keys. Supported Phase 1 parameters are:

- Main mode

- AES/AES256/AES-GCM (user configurable)

- Diffie-Hellman group

- Pre-shared secret (user configurable)

- SA lifetime of 28800 seconds (eight hours), with no kbytes rekeying

- ISAKMP aggressive mode disabled

Phase 2 parameters

IKE Phase 2 negotiates the IPsec tunnel by generating keying material, either by deriving it from the Phase 1 keys or by performing a new key exchange. Supported Phase 2 parameters are:

- AES/AES256/AES-GCM (matches the Phase 1 setting)

- ESP tunnel mode

- Diffie-Hellman group

- Perfect forward secrecy for rekeying (only if enabled on both endpoints)

- SA lifetime of 3600 seconds (one hour), with no kbytes rekeying

- Selectors for all IP protocols and all ports between the two networks, using IPv4 subnets

Configure the edge gateway firewall for VPN

After the VPN tunnel is established, create firewall rules on the edge gateway for traffic passing over the tunnel.

- Create firewall rules for both traffic directions: data center to OVDC and OVDC to data center.

- For data center to OVDC, set:

- Source to the source IP range of the external OVDC or data center network

- Destination to the destination IP range of the OVDC network

- For OVDC to data center, set:

- Source to the source IP range of the OVDC network

- Destination to the destination IP range of the data center or external OVDC network

Validating the tunnel

After both ends of the IPsec tunnel are configured, the connection should establish automatically.

To verify tunnel status in the Operational Console:

- On the Edges page, select the edge to configure and click Configure Services.

- Select the Statistics tab, then select the IPsec VPN tab.

- For each configured tunnel, a tick indicates that the tunnel is operational. If a different status is displayed, review the tunnel configuration and firewall rules.

- Traffic can now be sent over the VPN.

After the tunnel is established, it can take up to two minutes for the VPN connection to be shown as active.

Create OVDC Distributed Firewall Rules

At the OVDC level, the distributed firewall can be used to apply micro‑segmentation via the Operational Console.

Using distributed firewall rules requires the MPC service option Distributed Firewall to be enabled.

Before you begin

- IP Set: Used as a source or destination in a rule. Create an IP Set for use in firewall rules and DHCP relay configuration. IP Sets are commonly used to group resources outside the VCD organization, such as the internet or a company WAN. In these cases, IP addresses or subnets are used.

- Static Groups: A static group contains one or more networks. When a static group is used in a distributed firewall rule, the rule applies to all VMs connected to the networks in the group.

- Dynamic Groups: Used to group VMs based on VM name, security tag, or both. By default, a dynamic group contains one criterion and one rule. It can be extended to up to three criteria, with up to four rules each.

Create distributed firewall rules

- In the Operational Console Virtual Datacenters dashboard, select the OVDC that contains the distributed firewall to configure.

- In the left navigation panel, click Networking > Datacenter Groups > Distributed Firewall.

- Click + to add a new row to the firewall rules table.

- For the New Rule, specify a Name.

- In the Source and Destination fields, specify the source and destination addresses for the firewall rule.

- Firewall groups

- IP Sets: Select NEW, then provide a name, description, and IP range or CIDR.

- Static Groups: Select NEW, provide a name, and click Save. Select Manage members to add new members, then select a network to add to the static group. The Associated VMs view shows which VMs are connected to the attached networks.

- Dynamic Groups: Select NEW, provide a name, and select the criteria type to use.

- Firewall IP Addresses: Add an IP address, CIDR, or range, then select Keep.

- Select Action (Allow, Drop, or Reject).

- Select IP Protocol (IPv4, IPv6, or both).

- Configure Logging, if required.

- Select Save.

Service Description

Service Options

MPC ST includes several service options that can be ordered separately.

Stretched Cluster

The Service Option “Stretched Cluster” is available in selected locations. A stretched cluster is deployed across two datacenters in the same metro with synchronous storage replication, resulting in an RPO=0 configuration.

The cluster must have an equal number of hosts in each location. One location in the metro is designated as the primary location and the other as the secondary location.

An Organizational Virtual Datacenter (OVDC) provides a logical environment with vCPU, GB RAM, storage resources, and network capabilities on which customers can define VMs. In a stretched cluster configuration, three types of OVDCs can be selected:

- VMs run on the primary location when available; if not available, the VMs move to the secondary location.

- VMs always run on the primary location; when the primary location is not available, the VMs do not move to the secondary location.

- VMs always run on the secondary location; when the secondary location is not available, the VMs do not move to the primary location.

Customers can define one or more OVDCs in an MPC ST environment. The vCPU is available in three variants:

- Full – one core is equivalent to one vCPU

- High – one core is equivalent to two vCPUs

- Standard – one core is equivalent to four vCPUs
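The core-to-vCPU ratios above translate into a simple capacity calculation. This is a minimal sketch; the function name and the 32-core figure in the usage example are illustrative assumptions, not values from the host catalog.

```python
# vCPUs provided per physical core for each variant, as listed in the text.
VCPUS_PER_CORE = {"Full": 1, "High": 2, "Standard": 4}


def vcpu_capacity(cores: int, variant: str) -> int:
    """Return the number of vCPUs that a given number of cores provides for a variant."""
    if cores < 0:
        raise ValueError("cores must not be negative")
    return cores * VCPUS_PER_CORE[variant]


if __name__ == "__main__":
    # 32 cores is a hypothetical figure used only for illustration.
    for variant in VCPUS_PER_CORE:
        print(variant, vcpu_capacity(32, variant))
```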

An OVDC can use a maximum of three available storage profiles:

- Ultra Performance

- High Performance

- Standard Performance

Customers can combine multiple OVDCs in different variants and sizes for different purposes. Security policies can be configured on the virtual networks, and OVDCs can be interconnected. For more information about OVDCs, see Virtual Data Center.

The connectivity and network options of the non‑stretched MPC ST service also apply to the stretched cluster. Networking and connectivity to access MPC ST workloads must be procured as part of Connectivity and are not included in the Service Option Stretched Cluster.

Included in the service:

- Network for synchronous data replication between the primary and secondary location; this network cannot be used for customer networking.

- Network for NSX connectivity.

- A third location that hosts the witness, which monitors the availability of the primary and secondary locations.

| PURCHASE UNIT | BILLING TYPE | UOM | DESCRIPTION |
|---|---|---|---|
| Service option Cluster Stretched Primary | Baseline | Per Cluster | Capability for stretched cluster with synchronous data replication for the primary location |
| Service option Cluster Stretched Secondary | Baseline | Per Cluster | Capability for stretched cluster with synchronous data replication for the secondary location |

Both service options must be purchased for a stretched cluster.

Backup and Restore

Backup and restore of MPC resources are provided through the Managed Private Backup Service, which is ordered separately. The service includes backup of VM data or application data.

Software Licensing

A catalog of software ISOs for MPC is available in the self-service portal. The catalog lists software that can be used in VMs running in the OVDCs and includes both open source and licensed software. Licensed software can be purchased through the Software Licensing product.

Bring Your Own License

Instead of purchasing licenses through the service, customers may bring their own software licenses. In this case, the software vendor license rules must be validated. The customer is responsible for meeting all software vendor compliance requirements.

Support Plan

The support plan provides an optional service that covers additional service requests and other services such as extended support, additional reporting, and design support.

The Managed Solutions Premier Support Plan is a prepaid program that allows the purchase of monthly or annual (one‑time payment) blocks of support hours at a discount. Support hours are consumed in increments of fifteen (15) minutes.

Without a prepaid Managed Solutions Premier Support Plan, support is charged at the standard hourly rate under the Premier Support Service. Support hours are calculated in increments of fifteen (15) minutes.

| PURCHASE UNIT | TYPE | CHARGE TYPE | UOM | ORDERING AND BILLING |
|---|---|---|---|---|
| Technical Support Plan | Monthly | Baseline | hour | Monthly reservation of hours for technical support |
| Technical Support Plan | Annual | Baseline | hour | Yearly reservation of hours for technical support |

The plan is not designated to one specific Managed Solutions product but applies to all Managed Solutions products purchased.

If all hours in the plan have been consumed, any additional hours will be charged at the standard hourly rate under the “Premier Support Service.”

Monthly or prepaid Managed Solutions Premier Support Plan hours do not roll over and are forfeited if not used. Usage beyond the pre‑purchased amount is billed at the regular “Premier Support Service” hourly rate unless an upgrade is requested.
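How plan hours are consumed can be illustrated with a short calculation. The sketch assumes that each request is rounded up to the next fifteen-minute increment separately; the text states the increment but not the rounding rule, so treat that as an assumption. Function names are hypothetical, and no rates are modelled.

```python
INCREMENT_MINUTES = 15


def round_up_to_increment(minutes: int, increment: int = INCREMENT_MINUTES) -> int:
    """Round a duration up to the next full increment (assumed rounding rule)."""
    if minutes <= 0:
        return 0
    return -(-minutes // increment) * increment


def split_usage(plan_minutes: int, request_minutes: list[int]) -> tuple[int, int]:
    """Return (minutes covered by the plan, minutes billed at the standard hourly rate)."""
    billed = sum(round_up_to_increment(m) for m in request_minutes)
    covered = min(billed, plan_minutes)
    return covered, billed - covered


if __name__ == "__main__":
    # A 2-hour plan and three requests of 20, 50, and 61 minutes.
    print(split_usage(120, [20, 50, 61]))  # (120, 45)
```

In the usage example the requests round to 30, 60, and 75 minutes, so 120 minutes are drawn from the plan and the remaining 45 minutes fall under the standard hourly rate.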

The plan is country‑specific and cannot be linked to a specific IBX data center.

Migration Support

To migrate workloads from on‑premises environments to MPC, migration tooling is provided to support self‑service workload migration without application refactoring. The tooling supports VMware workloads from vSphere or Cloud Director. To enable migration, the customer receives an appliance that can be installed in the customer VMware environment and paired with the customer MPC environment. The tooling supports asynchronous replication.

By default, the migration tooling supports migration over the internet. On request, a private connection can be created over Equinix Fabric; costs for the Fabric connection are charged through Fabric. Migration over Fabric requires virtual circuits dedicated to migration, so existing virtual circuits cannot be used. Internet migration supports speeds up to 250 Mbps, while Fabric connections support speeds up to 10 Gbps. The use of the migration tooling is free of charge; additional support can be requested through the Support plan. After the migration has been completed, the tooling is disabled.

| Connection | Speed | Network Configuration |
|---|---|---|
| Internet | Up to 250 Mbps | No |
| Fabric | Up to 10 Gbps | Yes, set up VLANs |
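To compare the two connection options, a rough lower bound on transfer time can be computed from the maximum speeds in the table. This is a back-of-the-envelope sketch that assumes decimal units (1 GB = 10^9 bytes), full line rate, and no protocol or replication overhead; real migrations will take longer. The 1,000 GB data volume is a hypothetical example.

```python
def transfer_hours(data_gb: float, link_mbps: float) -> float:
    """Best-case hours needed to move data_gb gigabytes over a link of link_mbps megabits per second."""
    if link_mbps <= 0:
        raise ValueError("link speed must be positive")
    bits = data_gb * 8 * 1000**3          # gigabytes to bits, decimal units
    seconds = bits / (link_mbps * 1_000_000)
    return seconds / 3600


if __name__ == "__main__":
    data_gb = 1000  # hypothetical data volume
    print(f"Internet (250 Mbps): {transfer_hours(data_gb, 250):.1f} h")
    print(f"Fabric (10 Gbps):    {transfer_hours(data_gb, 10_000):.2f} h")
```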


Service Demarcation and Enabling Services

MPC ST is a managed service with predefined design choices. As a result, not all VMware technical options are available in the MPC ST service. The following topics are commonly requested but are not supported.

Access

- Access to vSphere or vCenter functions other than through the MPC Operational Console and Operational Console API

Compute

- Create more than one snapshot

Virtual Disks

- Move virtual disks between VMs through the MPC Operational Console or API. To move virtual disks between VMs, a support ticket must be created with the Equinix support desk

- Share a virtual disk between multiple VMs

- Use of Microsoft Windows Server Failover Clustering (WSFC) with shared disks

Network

- Use of Single Root I/O Virtualization (SR-IOV) and physical NIC access from the VM

- Use of promiscuous mode on the vNIC

- Use of trunking in the OVDC for networks connected to customer-owned routers

- Connect to MPC through redundant layer-2 solutions such as Metro Connect

Purchase Units

The MPC service is charged based on baseline values or baseline with overage charge types.

- Baseline - the specified volume of the unit of measure for the service, as defined in the order.

- Overage - the quantity of the service consumed by the customer that exceeds the contracted baseline volume.
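The relationship between baseline and overage can be written as a one-line formula. The sketch below only expresses the definitions above; the function name and the example quantities are hypothetical, and pricing is deliberately left out.

```python
def overage_quantity(baseline: float, consumed: float) -> float:
    """Quantity consumed above the contracted baseline; never negative."""
    return max(0.0, consumed - baseline)


if __name__ == "__main__":
    # Hypothetical example: 20 VMs contracted as baseline, 23 VMs consumed.
    print(overage_quantity(20, 23))  # 3.0
    print(overage_quantity(20, 18))  # 0.0
```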

Catalog of Purchase Units

| Category | Purchase Unit | UOM | Install Fee | Billing Method | Overage |
|---|---|---|---|---|---|
| MPC Service | Connectivity – Routed | Each | | Baseline | |
| | Connectivity – Customer Routed | Each | | Baseline | |
| | Connectivity – Managed Firewall | Each | | Baseline | |
| | Connectivity – Routed Joined | Each | | Baseline | |
| MPC Compute | Host | Host | Yes | Baseline | |
| MPC Service Option | Network – Managed Virtual Circuit xx Mbps | Each | Yes | Baseline | |
| | Network – Managed Internet Access xx Mbps | Each | | Baseline | |
| | Network – Additional IP space (/24 /25 /26 /27 /28 /29) | Each | | Baseline | |
| | Network – External Network | Each | No | Baseline | |
| | Network – Distributed Firewall (per Core) | Core | | Baseline | |
| | Disaster Recovery (Gold, Silver, Bronze) | VM | No | Baseline | Yes |

Roles and Responsibilities

During service fulfillment and delivery, responsibilities are shared between Equinix and the customer. The following section provides an overview of these responsibilities.

Tenant Provisioning

| Activities | Equinix | Customer |

|---|---|---|

| Schedule / execute project kickoff meeting | RA | CI |

| Schedule / execute customer onboarding | RA | CI |

| Delivery of the hosts in a cluster in accordance with the order | RAC | I¹ |

| Delivery of the OVDCs in accordance with design | RAC | I¹ |

| Delivery of the agreed storage capacity in accordance with design | RAC | I¹ |

| Delivery of the connectivity type in accordance with the order | RAC | I¹ |

| Delivery of the Managed Virtual Circuits in accordance with the order | RAC | C |

| Initiation of Unmanaged Virtual Circuits in Fabric portal | I | RAC |

| Configuration of customer‑initiated Virtual Circuits | RAC | C |

| Delivery of the Managed Internet Access in accordance with the order | RAC | C |

| Delivery of the agreed network functionality in accordance with design (optional) | RAC | C |

| Delivery of the MPC Operational Console | RAC | I¹ |

| Delivery of the Admin account for the Operational Console | RAC | I¹ |

Acceptance Into Service

After onboarding activities are completed, testing activities confirm whether the product has been delivered successfully and is ready for billing.

| Activities | Equinix | Customer |

|---|---|---|

| Test access to MPC Product page on Managed Solutions Portal | CI | RA |

| Test access to MPC operational console | CI | RA |

| Confirm MPC fulfillment based on preview evidence | CI | RA |

| Set product as enabled for customer on internal systems | RA | I |

Operational

Once the Managed Private Cloud service is enabled for customers, the following operational items apply:

| Activities | Equinix | Customer |

|---|---|---|

| Technical management of the service (overall) | RAC | I¹ |

| Functional management of the customer environment within the service (overall) | I² | RAC |

| MPC infrastructure monitoring and maintenance | RA | I |

| Create, import, and manage VMs and vApps | I² | RAC |

| Scale VMs up and down | I² | RACI |

| Manage VM snapshots | | RACI |

| Manage access to VMs with console | | RACI |

| Create and manage library with customer-owned ISO/OVA files | | RACI |

| Separate or group VMs for availability or performance | I² | RAC |

| NFV: Standard firewalling | I² | RAC |

| NFV: Routing (static) | I² | RAC |

| NFV: Routing | RAC | I |

| NFV: NAT | I² | RAC |

| NFV: DHCP | I² | RAC |

| NFV: VPN (IPsec) | I² | RAC |

| Setup and manage scripting and automation capabilities | | RACI |

RACI stands for Responsible, Accountable, Consulted, and Informed.

¹ Informing is only mandatory for tasks that have an impact on the functioning of the user environment.

² Informing is only required for tasks that have an impact on the operation and/or management of the service.

Incident Management

Incident management is included in the service support. All incidents are handled based on priority. Priority is determined after the incident is reported and assessed by Equinix based on the information provided.

| Priority | Impact / Urgency | Description |

|---|---|---|

| P1 High | Unforeseen unavailability, caused by a disruption, of a service or environment that Equinix delivers and manages in accordance with the service description. The user cannot fulfill its obligations toward its own users and suffers direct, demonstrable damage from the unavailability of this functionality. | The service must be restored immediately; the production environment(s) is/are unavailable, with platform‑wide disruptions. |

| P2 Medium | The service offers only partial functionality or reduced performance, and users are impacted as a result. The user suffers direct, demonstrable damage from the unavailability of the functionality, and the service may be impacted by its limited availability. | The service must be repaired the same working day; the environment is not available. |

| P3 Low | The service functions with limited availability for one or more users and there is a workaround in place. | The moment of repair of the service is determined in consultation with the reporting person. |

Note: This classification does not apply to disruptions caused by, for example, user-specific applications, actions by the user, or dependencies on third parties. Incidents can be submitted through the Customer Portal under Managed Solutions. P1 incidents must be submitted by phone.

Service Requests

Service requests are used to report service issues or to request assistance with implementation or configuration changes. Standard configuration changes can be requested through the MPC self‑service portal as a Service Request. Support is available 24×7×365. Two types of service requests are available:

- Included - Service requests that are within the scope of the service and do not incur additional charges.

- Additional - Service requests that are outside the scope of the service and incur additional charges.

| Request Name | Included / Additional |

|---|---|

| Create a DC Group over multiple OVDCs | Additional |

| Change external access OVDC API | Additional |

| Add a user for the Operational Console | Included |

| Remove a user from the Operational Console | Included |

| Change permissions for a user | Included |

Changes not listed above can be requested by selecting Change in the service request module. Equinix will perform an impact analysis to determine feasibility, cost, and lead time. Charges related to service requests are deducted from the Premier Support Plan balance. If the balance is insufficient, charges are invoiced at the prevailing rate. Requests that impact baseline capacity, ordered quantities, or any changes impacting the monthly service fee must be requested through the Equinix Sales team.

Reporting

As part of the service, the customer will receive monthly service reporting covering the following topics:

- Raised tickets measured against SLA parameters

- Capacity per OVDC

Service Levels

The Service Level Agreement (SLA) defines the measurable performance levels applicable to the MPC service and specifies the remedies available to the customer if Equinix fails to meet these levels. The service credits listed below are the sole and exclusive remedy for failure to meet the service level thresholds defined in this section.

| Priority | Response Time¹ | Resolution Time² | Execution of Work | SLA³ |

|---|---|---|---|---|

| P1 | < 30 min | < 4 hours | 24x7 | 95% |

| P2 | < 60 min | < 24 hours | 24x7 | 95% |

| P3 | < 120 min | < 5 days | 24x7 | 95% |

¹ Response time is measured from the moment a trouble ticket is submitted until an Equinix Managed Solutions specialist provides a formal response.

² Resolution time is measured from ticket creation to closure, cancellation, or handover to IBX Support.

³ The SLA applies to the response time. Details on the SLA can be found in the Product Policy.
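
The response-time targets can be checked with a short calculation. The sketch below computes, per priority, the share of tickets answered within the target from the table above. The ticket data, the function name, and the choice to measure compliance separately per priority are assumptions made for illustration; the authoritative measurement method is defined in the Product Policy.

```python
from datetime import timedelta

# Response-time targets per priority, taken from the table above.
RESPONSE_TARGETS = {
    "P1": timedelta(minutes=30),
    "P2": timedelta(minutes=60),
    "P3": timedelta(minutes=120),
}
SLA_THRESHOLD = 0.95  # share of tickets that must meet the response target


def response_compliance(tickets):
    """Return {priority: fraction of tickets answered within the target}.

    Each ticket is a hypothetical (priority, response_time) pair.
    """
    totals, met = {}, {}
    for priority, took in tickets:
        totals[priority] = totals.get(priority, 0) + 1
        if took < RESPONSE_TARGETS[priority]:  # targets are strict ("<")
            met[priority] = met.get(priority, 0) + 1
    return {p: met.get(p, 0) / totals[p] for p in totals}


# Assumed sample data for one reporting period.
sample = [
    ("P1", timedelta(minutes=12)),
    ("P2", timedelta(minutes=75)),  # misses the 60-minute target
    ("P3", timedelta(minutes=90)),
    ("P3", timedelta(minutes=30)),
]
for priority, ratio in sorted(response_compliance(sample).items()):
    status = "met" if ratio >= SLA_THRESHOLD else "missed"
    print(f"{priority}: {ratio:.0%} within target, SLA {status}")
```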

The Availability Level of the MPC service refers to the availability of a single OVDC. The MPC service is considered Unavailable when a failure in infrastructure managed by Equinix causes the OVDC to enter an error state and directly results in an interruption to the customer’s services.

| Availability Service Level | Description |

|---|---|

| 99.95%+ | Achieved when total OVDC unavailability is less than 22 minutes over a calendar month. |
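
The relationship between the availability percentage and the monthly downtime figure can be illustrated with a short calculation. The sketch below derives the downtime budget implied by 99.95% for a given calendar month. The function names are assumptions for illustration; the contractual threshold remains the one stated in the table and the Product Policy.

```python
import calendar

AVAILABILITY_TARGET = 0.9995  # 99.95%, from the table above


def minutes_in_month(year: int, month: int) -> int:
    """Total number of minutes in the given calendar month."""
    days = calendar.monthrange(year, month)[1]
    return days * 24 * 60


def allowed_downtime_minutes(year: int, month: int) -> float:
    """Downtime budget in minutes implied by the availability target."""
    return minutes_in_month(year, month) * (1 - AVAILABILITY_TARGET)


def availability(year: int, month: int, downtime_minutes: float) -> float:
    """Measured availability as a fraction of the month's total minutes."""
    return 1 - downtime_minutes / minutes_in_month(year, month)


print(round(allowed_downtime_minutes(2025, 4), 1))  # 30-day month
print(round(allowed_downtime_minutes(2025, 1), 1))  # 31-day month
```

The budget works out to 21.6 minutes for a 30-day month and about 22.3 minutes for a 31-day month, which corresponds to the figure of roughly 22 minutes in the table.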

A service credit regime applies to the availability SLA, as defined in the Product Policy. Availability of the MPC service does not include data restoration, which is the responsibility of the customer. When Managed Private Backup is contracted, the customer can restore data through self-service using the Managed Private Backup Operational Console.