Managed Private Cloud Core

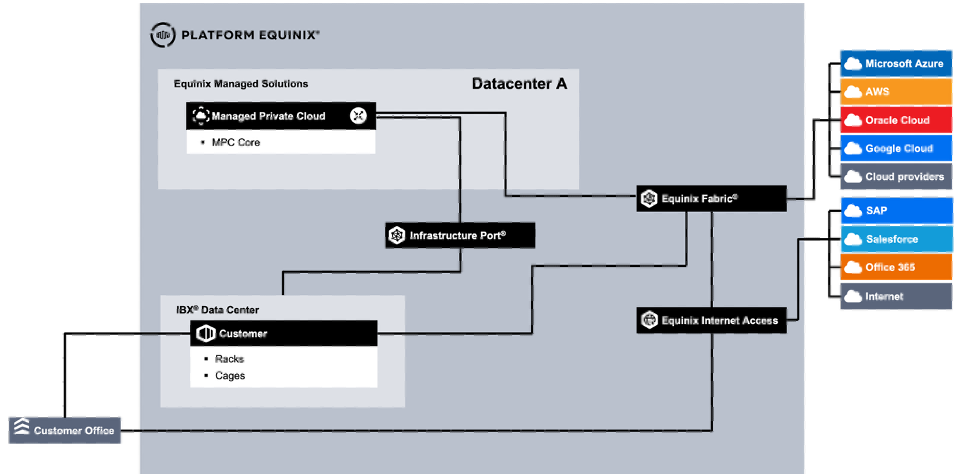

MPC Core is a dedicated, VMware Cloud Foundation (VCF)-based option for customers who need full-stack control within a managed environment. MPC Core provides a dedicated infrastructure with compute, storage, and network resources in a dedicated Secure Cabinet Express in an Equinix datacenter. The MPC Core service is based on and aligned with the VMware Cloud Foundation architectural blueprints. Connectivity is supported for Layer-2 and Layer-3 networks through Equinix Fabric or direct connections using Equinix Metro, Campus, and/or Cross Connect. Equinix manages the full stack, including VCF, while customers configure their infrastructure within the boundaries of a VCF deployment. Customers can choose to include the VCF license in the service or use the BYOL option from Broadcom.

MPC Core is available in selected datacenters globally. When procured in different regions (AMER, EMEA, APAC), an independent implementation is delivered for each region. When multiple implementations are delivered within the same region, Active Directory and DNS can be shared between those implementations.

MPC Core Blueprints

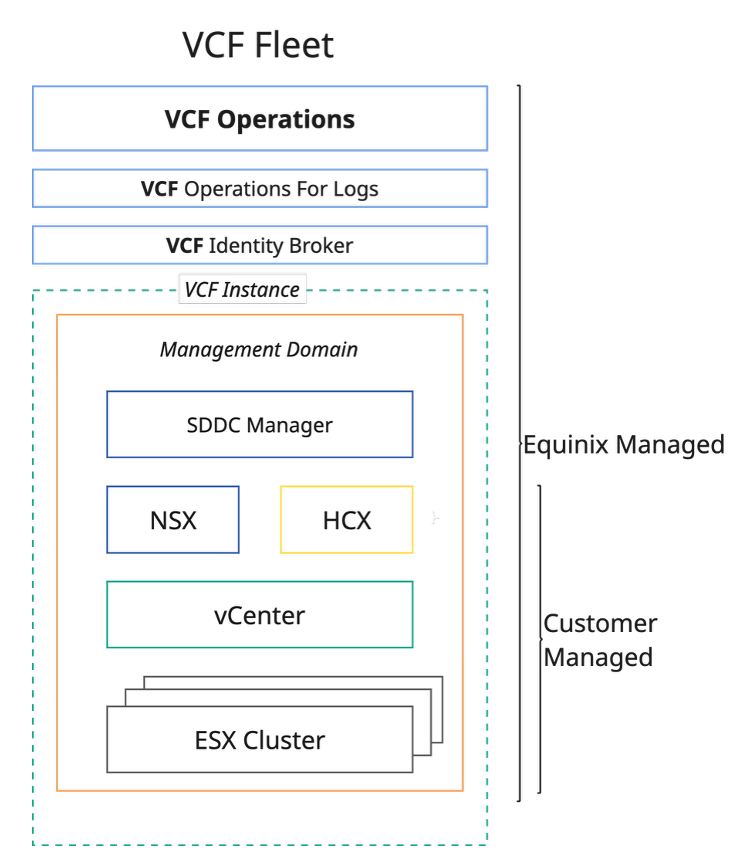

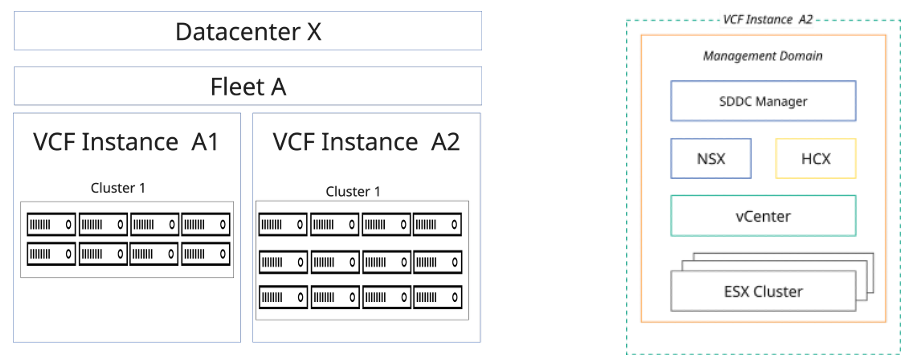

The MPC Core design is based on the VMware VCF blueprint VCF Fleet in a Single Site with minimal footprint, with HA enabled at the management component level (clustered implementations). VCF management components and customer resources run in a single cluster. This blueprint provides a management domain containing one cluster with a resource pool for management components and a resource pool for customer resources.

The management domain is additionally equipped with VCF Operations HCX to support migration to the MPC Core platform. The management domain is controlled by Equinix. The resource pool contains customer resources, and the available capacity depends on the installed management components, the number of hosts in the cluster, and the host type.

Storage is provided by vSAN. For connectivity, a customer can use NSX and/or install a self-managed routing appliance. A customer may also use the Managed Firewall service, which is an Equinix-managed firewall offering that must be ordered separately (Option Connectivity Managed Firewall).

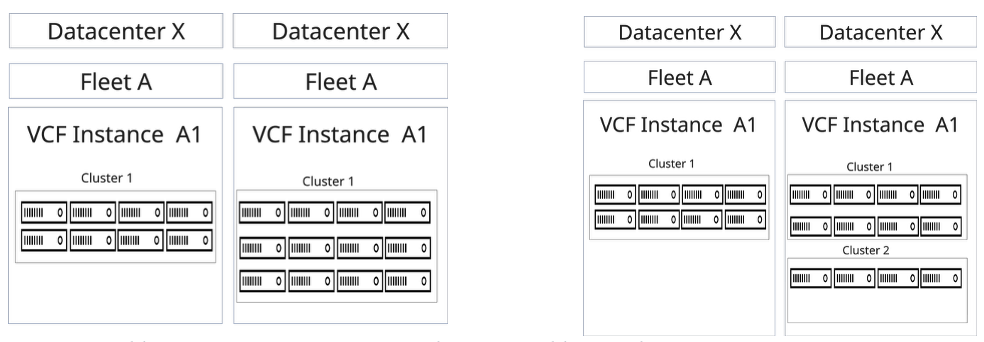

A fleet is an architectural construct that groups multiple VCF instances to support centralized management and self-service operations. The first MPC Core instance in a datacenter is a VCF fleet containing a single VCF instance. This design supports up to 5 clusters, 100 hosts, or 1000 VMs by default. When additional scaling is required, some management components must be increased in size, and this capacity is deducted from the customer resource pool.
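As a rough illustration, a planned footprint can be compared against the default design limits quoted above. The function name and data layout below are hypothetical; only the three limit values come from the text.

```python
# Default design limits quoted in the text (before any management
# components are increased in size).
DEFAULT_LIMITS = {"clusters": 5, "hosts": 100, "vms": 1000}


def exceeded_limits(clusters: int, hosts: int, vms: int) -> list[str]:
    """Return the names of the default limits that a planned footprint exceeds."""
    planned = {"clusters": clusters, "hosts": hosts, "vms": vms}
    return [name for name, limit in DEFAULT_LIMITS.items() if planned[name] > limit]


# Example: 3 clusters and 40 hosts are within limits, 1200 VMs are not.
print(exceeded_limits(clusters=3, hosts=40, vms=1200))  # ['vms']
```

A non-empty result indicates that additional scaling, and therefore larger management components, would likely be needed.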

The installed software included in the MPC Core VCF Fleet in a Single Site is:

- VCF Installer

- VCF Fleet Management

- VCF Operations

- VCF Operations Cloud Proxy

- vCenter

- NSX Managers

- NSX Edges

- VCF Operations for Logs

- VCF Identity Broker

- VCF Operations HCX

- Core Management Infrastructure (Active Directory Domain Services, DNS, Certificate Authority, NTP, Monitoring, Jump Host)

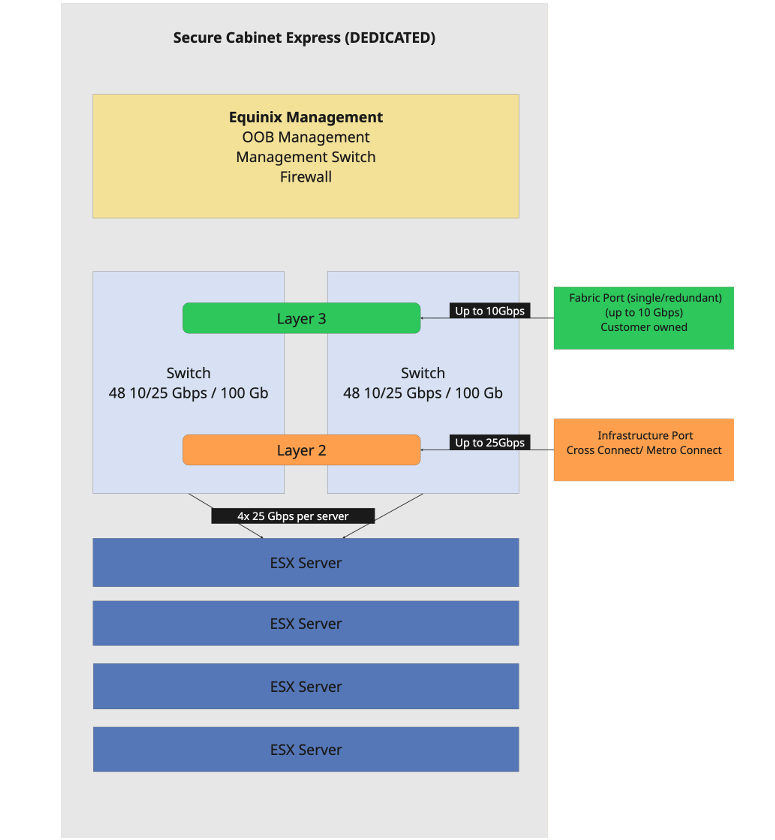

MPC Core Cabinet

MPC Core is delivered from an Equinix datacenter in a dedicated Secure Cabinet Express. Depending on its size, a cluster can span multiple cabinets. The cabinet and power are included in the host price. Equinix manages the cabinet and access to it, and the cabinet is not available for customer equipment. The maximum number of hosts per cabinet depends on power availability and on the number and power consumption of the servers.

MPC Core infrastructure

For management of MPC Core, the first cabinet is equipped with management firewalls, out‑of‑band (OOB) management capabilities, and management switches. Every cabinet includes two redundant switches with 2×48 25 Gbps ports for external connectivity and server connectivity. Each server uses two ports from each switch for redundancy. The switches are managed by Equinix. When two cabinets are deployed, the switches are redundantly interconnected between the cabinets, providing 400 Gbps of bandwidth.

MPC Core Cluster

- Cluster - An MPC Core environment includes a cluster configuration of at least seven hosts, in which six hosts are available for use and one host is reserved for maintenance and high availability (N + 1 principle).

- Host - MPC Core provides a catalog of host types based on CPU, cores, RAM, and storage.

| Host | Host Type | Billing Type | Description |

|---|---|---|---|

| AHL-16L05V4#4 | Generic Host | Baseline | 16C, 512GB RAM, 4*3.84TB NVME |

| AHL-16L05V6#4 | Generic Host | Baseline | 16C, 512GB RAM, 6*3.84TB NVME |

| AHL-32L05V8#4 | Generic Host | Baseline | 32C, 512GB RAM, 8*3.84TB NVME |

| AHL-32L05V6#8 | Generic Host | Baseline | 32C, 512GB RAM, 6*7.68TB NVME |

| AHL-32L05V8#8 | Generic Host | Baseline | 32C, 512GB RAM, 8*7.68TB NVME |

| AHL-32L05V6#15 | Generic Host | Baseline | 32C, 512GB RAM, 6*15.36TB NVME |

| AHL-32L10V8#4 | Generic Host | Baseline | 32C, 1024GB RAM, 8*3.84TB NVME |

| AHL-32L10V6#8 | Generic Host | Baseline | 32C, 1024GB RAM, 6*7.68TB NVME |

| AHL-32L10V8#8 | Generic Host | Baseline | 32C, 1024GB RAM, 8*7.68TB NVME |

| AHL-32L10V6#15 | Generic Host | Baseline | 32C, 1024GB RAM, 6*15.36TB NVME |

| AHL-64L10V8#8 | Generic Host | Baseline | 64C, 1024GB RAM, 8*7.68TB NVME |

| AHL-64L10V6#15 | Generic Host | Baseline | 64C, 1024GB RAM, 6*15.36TB NVME |

| AHL-64L20V8#8 | Generic Host | Baseline | 64C, 2048GB RAM, 8*7.68TB NVME |

| AHL-64L20V6#15 | Generic Host | Baseline | 64C, 2048GB RAM, 6*15.36TB NVME |

Note: The cluster hosts are committed for the full contract period. Every MPC Core cluster must use hosts of the same type. Platform limits for MPC Core are defined at the VCF solution level.

Cluster Sizing

Cluster sizing should be based on the required compute capacity and availability. A cluster consists of the net consumable capacity, expressed as a number of hosts (N), plus spare capacity for availability, recovery, and maintenance. The spare capacity required depends on the cluster size.

- Minimal cluster size is 7 hosts (N+1).

- Cluster sizes above 15 hosts require a second spare host (N+2).
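The two sizing rules above can be sketched as a small helper. This is a minimal illustration, not an official sizing tool; the function and constant names are hypothetical.

```python
MIN_CLUSTER_HOSTS = 7    # minimal cluster size stated above
SECOND_SPARE_ABOVE = 15  # clusters larger than this need a second spare (N+2)


def spare_hosts(total_hosts: int) -> int:
    """Number of spare hosts reserved in a cluster of the given total size."""
    if total_hosts < MIN_CLUSTER_HOSTS:
        raise ValueError(f"a cluster needs at least {MIN_CLUSTER_HOSTS} hosts")
    return 2 if total_hosts > SECOND_SPARE_ABOVE else 1


def active_hosts(total_hosts: int) -> int:
    """Net consumable hosts (N) after subtracting the spares."""
    return total_hosts - spare_hosts(total_hosts)


for size in (7, 14, 16):
    print(f"{size} ({active_hosts(size)}+{spare_hosts(size)})")
# 7 (6+1)
# 14 (13+1)
# 16 (14+2)
```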

Cluster Sizes and Net Capacity

Capacity for spare servers is reserved to ensure availability. Spare nodes cannot be used while all active nodes remain operational, and the capacity they provide is not included in the calculated available capacity. The overview below describes example cluster sizes, including minimum and maximum sizes, and the associated usable net capacity expressed in CPU cores and GB RAM. Vendor‑specified overhead must be subtracted from the capacity of the first cluster. The advised sizing is based on vendor recommendations:

- Compute

  - N = N+1

  - Overhead for hypervisor

  - Maximum 80% average use of CPU and memory

- Storage

  - Overhead for vSAN rebuild and operations reserve

| MPC Core Cluster Size | Hypervisor Server Model | Spare Servers | Usable Capacity | Advised Capacity |

|---|---|---|---|---|

| 7 (6+1) minimum | 16C, 512 GB RAM | 1 | 42 CPU cores, 1350 GB RAM | 39 CPU cores, 1220 GB RAM |

| 7 (6+1) minimum | 32C, 512 GB RAM | 1 | 90 CPU cores, 1350 GB RAM | 77 CPU cores, 1220 GB RAM |

| 7 (6+1) minimum | 32C, 1024 GB RAM | 1 | 90 CPU cores, 2850 GB RAM | 77 CPU cores, 2450 GB RAM |

| 7 (6+1) minimum | 64C, 1024 GB RAM | 1 | 180 CPU cores, 2850 GB RAM | 154 CPU cores, 2450 GB RAM |

| 7 (6+1) minimum | 64C, 2048 GB RAM | 1 | 180 CPU cores, 5700 GB RAM | 154 CPU cores, 4910 GB RAM |

| 14 (13+1) | 16C, 512 GB RAM | 1 | 182 CPU cores, 5850 GB RAM | 167 CPU cores, 5320 GB RAM |

| 14 (13+1) | 32C, 512 GB RAM | 1 | 390 CPU cores, 5850 GB RAM | 333 CPU cores, 5320 GB RAM |

| 14 (13+1) | 32C, 1024 GB RAM | 1 | 390 CPU cores, 12350 GB RAM | 333 CPU cores, 10640 GB RAM |

| 14 (13+1) | 64C, 1024 GB RAM | 1 | 780 CPU cores, 12350 GB RAM | 666 CPU cores, 10640 GB RAM |

| 14 (13+1) | 64C, 2048 GB RAM | 1 | 780 CPU cores, 24700 GB RAM | 666 CPU cores, 22930 GB RAM |

| 16 (14+2) | 16C, 512 GB RAM | 2 | 196 CPU cores, 6300 GB RAM | 180 CPU cores, 5730 GB RAM |

| 16 (14+2) | 32C, 512 GB RAM | 2 | 420 CPU cores, 6300 GB RAM | 359 CPU cores, 5730 GB RAM |

| 16 (14+2) | 32C, 1024 GB RAM | 2 | 420 CPU cores, 13300 GB RAM | 359 CPU cores, 11460 GB RAM |

| 16 (14+2) | 64C, 1024 GB RAM | 2 | 840 CPU cores, 13300 GB RAM | 717 CPU cores, 11460 GB RAM |

| 16 (14+2) | 64C, 2048 GB RAM | 2 | 840 CPU cores, 26600 GB RAM | 717 CPU cores, 22930 GB RAM |

Note: The MPC Core management stack and VCF overhead (hypervisor, vSAN, NSX) are deducted from available capacity. Sizing should target a maximum of 80% utilization to avoid future growth limitations.

MPC Core Storage

In MPC Core, storage is included in the host and based on vSAN technology. The raw capacity of the cluster is available to the customer. Data redundancy policies depend on the number of hosts in the cluster. Vendor‑specified overhead must be deducted from the cluster capacity. Customers can select their preferred RAID configuration. vSAN is configured with encryption at rest and encryption in transit. Customers are responsible for ensuring that enough space is available for maintenance operations.

The following table presents the guaranteed capacity based on six disks in each server. The expected capacity can be up to a factor of 1.2 higher, depending on generic VM data characteristics, compressibility, and data that is not customer‑encrypted, according to platform averages and vendor‑recommended policies.

| Servers in Cluster | Capacity with Disk Size in TB (3.84) | Capacity with Disk Size in TB (7.68) | Protection |

|---|---|---|---|

| 4 | 24.6 | 53.43 | RAID-5 (Single Parity / 2+1 Scheme / 50% RAID overhead) |

| 5 | 34.09 | 73.47 | RAID-5 (Single Parity / 2+1 Scheme / 50% RAID overhead) |

| 6 | 52.29 | 112.19 | RAID-5 (Single Parity / 4+1 Scheme / 25% RAID overhead) |

| 7 | 53.06 | 113.53 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 8 | 62.55 | 133.56 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 9 | 72.03 | 153.59 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 10 | 81.51 | 173.62 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 11 | 90.99 | 193.65 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 12 | 100.47 | 213.68 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 13 | 109.95 | 233.71 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 14 | 119.43 | 253.74 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |

| 15 | 128.91 | 273.77 | RAID-6 (Dual Parity / 4+2 Scheme / 50% RAID overhead) |
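The storage table can be read as a simple lookup: the protection scheme follows from the number of servers, and the expected capacity is at most 1.2 times the guaranteed figure. The sketch below encodes the table values as given; the function names are hypothetical, and cluster sizes outside the table (fewer than 4 or more than 15 servers) are deliberately rejected rather than extrapolated.

```python
# Guaranteed capacity in TB, copied from the table (six disks per server).
GUARANTEED_TB = {
    3.84: {4: 24.6, 5: 34.09, 6: 52.29, 7: 53.06, 8: 62.55, 9: 72.03,
           10: 81.51, 11: 90.99, 12: 100.47, 13: 109.95, 14: 119.43, 15: 128.91},
    7.68: {4: 53.43, 5: 73.47, 6: 112.19, 7: 113.53, 8: 133.56, 9: 153.59,
           10: 173.62, 11: 193.65, 12: 213.68, 13: 233.71, 14: 253.74, 15: 273.77},
}
EXPECTED_FACTOR = 1.2  # "up to a factor of 1.2 higher" per the text


def protection(servers: int) -> str:
    """Protection scheme listed in the table for a given server count."""
    if servers in (4, 5):
        return "RAID-5 (2+1)"
    if servers == 6:
        return "RAID-5 (4+1)"
    if 7 <= servers <= 15:
        return "RAID-6 (4+2)"
    raise ValueError("server count is outside the published table")


def capacity_range_tb(servers: int, disk_tb: float) -> tuple[float, float]:
    """Return (guaranteed, upper bound of expected) capacity in TB."""
    guaranteed = GUARANTEED_TB[disk_tb][servers]
    return guaranteed, round(guaranteed * EXPECTED_FACTOR, 2)


print(protection(7), capacity_range_tb(7, 3.84))  # RAID-6 (4+2) (53.06, 63.67)
```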

VMware Licensing

MPC Core supports the use of customer‑owned VCF licensing from Broadcom/VMware or licenses provided by Equinix. Bring‑Your‑Own‑License (BYOL) usage is subject to Broadcom compliance requirements. To use BYOL licensing for an MPC Core instance, the licenses must be shared with Equinix and included in Equinix reporting to Broadcom.

MPC Core Connectivity

MPC Core can be integrated into customer (multi-)cloud architectures through several connectivity options. Two aspects must be considered when determining how MPC Core connects to external resources:

- The connectivity type (layer 2 or layer 3)

- The routing (Equinix provided or customer provided)

MPC Core external connectivity supports the following:

- BGP-based layer-3

  a. Equinix routed using Equinix Fabric™, Equinix Cloud Router™, or Equinix Network Edge™

  b. Customer-provided routing using Cross Connect™, Metro Connect™, or Infrastructure Port™

- Layer-2 via Infrastructure Port™, Cross Connect™, or Metro Connect™

  a. Non-redundant using a single connection

  b. Redundant, using LACP-based dual connections (not via Fabric)

Layer-2 and layer-3 connections are both supported within a single MPC Core implementation.

| Product | Layer-3 | Layer-2 | Layer-2 redundant with LACP |

|---|---|---|---|

| Fabric | X | X | - |

| Network Edge | X | X | - |

| Cloud Router | X | X | - |

| Cross Connect | X | X | X |

| Metro Connect | X | X | X |
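For design checks, the support matrix above can be expressed as a lookup. This is only a sketch that mirrors the table; the capability keys (`layer3`, `layer2`, `layer2_lacp`) and the function name are hypothetical.

```python
# Capabilities per product, mirroring the table ("X" = supported).
CONNECTIVITY_SUPPORT = {
    "Fabric": {"layer3", "layer2"},
    "Network Edge": {"layer3", "layer2"},
    "Cloud Router": {"layer3", "layer2"},
    "Cross Connect": {"layer3", "layer2", "layer2_lacp"},
    "Metro Connect": {"layer3", "layer2", "layer2_lacp"},
}


def products_supporting(capability: str) -> list[str]:
    """List the products that support the given capability, sorted by name."""
    return sorted(
        product
        for product, capabilities in CONNECTIVITY_SUPPORT.items()
        if capability in capabilities
    )


print(products_supporting("layer2_lacp"))  # ['Cross Connect', 'Metro Connect']
```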

- Connectivity Routed - In this variant, the NSX network solution included in the VCF suite is used as the routing and virtual network function. Two NSX Edge appliances, deployed in the Large size, are installed by Equinix in the customer resource pool and configured with customer‑provided BGP. All layer‑3 connectivity is terminated at the NSX Edge router. The Edge is connected using two VLANs for external connectivity. Layer‑2 connections are terminated in a port group in vCenter. With connectivity routed, the available network functions are limited to stateless firewalling.

When stateful firewalling or distributed firewalling is required, additional service options can be ordered, including Gateway Firewall and Distributed Firewall.

- Connectivity Customer Routed - In this variant, the customer provides a licensed routing appliance. NSX components are still installed as required by the VCF deployment. All routed external connections are terminated at the customer‑provided routing appliance. The appliance connects using two VLANs for external connectivity. Layer‑2 connections are terminated in a port group in vCenter.

With customer‑provided routing, the Distributed Firewall can be used as an optional feature, licensed through the service option.

- IP Addressing and VLANs - Equinix uses default IP ranges and VLANs for the MPC Core instance. Customers may supply their own IP addresses and VLANs. If a conflict occurs, Equinix will assign an alternative range in consultation with the customer.

The service is configured using IPv4. Customers can use IPv4 and IPv6 for their workloads if supported by the VMware VCF solution.
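A conflict between customer-supplied ranges and ranges already in use can be detected ahead of onboarding with Python's standard `ipaddress` module. The sketch below is illustrative only: the actual default ranges are not stated in this document, so the `reserved` values are placeholders.

```python
import ipaddress


def conflicting_ranges(customer_ranges, reserved_ranges):
    """Return (customer, reserved) pairs of CIDR ranges that overlap."""
    conflicts = []
    for customer in customer_ranges:
        customer_net = ipaddress.ip_network(customer)
        for reserved in reserved_ranges:
            reserved_net = ipaddress.ip_network(reserved)
            # Only compare networks of the same IP version.
            if (customer_net.version == reserved_net.version
                    and customer_net.overlaps(reserved_net)):
                conflicts.append((customer, reserved))
    return conflicts


# Placeholder ranges for illustration; not the real MPC Core defaults.
reserved = ["10.250.0.0/16"]
customer = ["10.250.8.0/24", "192.168.10.0/24"]
print(conflicting_ranges(customer, reserved))
# [('10.250.8.0/24', '10.250.0.0/16')]
```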

NSX Networking Functions

VCF and NSX provide a catalog of networking functions. Some functions are included as part of the VCF license, while others require additional per‑core licensing.

| Type | Description | Edge Cluster Required | Extra License |

|---|---|---|---|

| Edge | Basic network functions | Yes | No |

| Gateway Firewall | Stateful Firewall | Yes | Yes |

| Distributed Firewall | East‑West protection | No | Yes |

To use NSX networking functions, the Connectivity Routed option must be selected. With this configuration, NSX functions become available to the customer. Edges are deployed in the customer cluster and consume resources from that cluster. The Edge type determines available features and performance.

| Edge Type | Size | Use Case |

|---|---|---|

| Edge Medium | Two edges in HA with 4 vCPU, 16 GB RAM, 300 GB storage each | - L2–L4 features - Expected bandwidth up to 2 Gbps - L3–L4 Firewall - NAT - Routing |

| Edge Large | Two edges in HA with 8 vCPU, 16 GB RAM, 300 GB storage each | - L2–L4 features - Expected bandwidth up to 10 Gbps - L3–L4 Firewall - NAT - Routing - Bridging - VPN - TLS Inspection |

| Edge X‑Large | Two edges in HA with 16 vCPU, 32 GB RAM, 300 GB storage each | - L2–L7 features - L3–L4 Firewall - NAT - Routing - Bridging - VPN - TLS Inspection - L7 Access Profile (URL filtering) - IDS / IPS - Malware prevention |

The Gateway Firewall provides a software‑defined Layer 2–7 firewall designed to secure virtualized workloads in a private cloud. It supports stateful firewalling with threat prevention capabilities. Advanced threat protection (ATP) combines multiple detection technologies, including Intrusion Detection and Prevention (IDS/IPS), network sandboxing, and network traffic analysis (NTA), along with aggregation, correlation, and context engines from network detection and response (NDR). This function requires additional licenses that can be ordered through the Gateway Firewall service option. The number of required licenses depends on the size of the Edge.

| Type | Number of Licenses |

|---|---|

| Edge Medium | 24 |

| Edge Large | 48 |

| Edge X‑Large | 96 |

The Distributed Firewall provides a software‑defined Layer 2–7 firewall designed to secure virtualized workloads, containers, and bare‑metal servers in a private cloud. It can be applied at each workload to segment east‑west traffic and prevent lateral movement of threats. To use the Distributed Firewall, the Service Option Distributed Firewall (DFW) must be selected. The number of required licenses is based on the size of the cluster in cores, and every core must be licensed.
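The licensing rules for both firewall options can be sketched as follows. The Gateway Firewall counts are taken from the table above. For the Distributed Firewall, the sketch assumes one license per physical core across every host in the cluster, spare hosts included; that is an interpretation of "every core must be licensed", not a confirmed formula. Function names are hypothetical.

```python
# Gateway Firewall license counts per Edge size, from the table above.
GATEWAY_FIREWALL_LICENSES = {
    "Edge Medium": 24,
    "Edge Large": 48,
    "Edge X-Large": 96,
}


def gateway_firewall_licenses(edge_type: str) -> int:
    """Licenses required for the Gateway Firewall on the given Edge size."""
    return GATEWAY_FIREWALL_LICENSES[edge_type]


def distributed_firewall_licenses(hosts: int, cores_per_host: int) -> int:
    """Assumed rule: one license per core on every host in the cluster."""
    return hosts * cores_per_host


print(gateway_firewall_licenses("Edge Large"))    # 48
print(distributed_firewall_licenses(7, 32))       # 224
```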

Access to MPC Core

MPC Core provides multiple user interfaces for operations:

- VCF Operations

- VCF Operations for Logs

- NSX

- vCenter

- VCF Operations HCX (available only during migration)

For identity and access management, MPC Core includes an identity broker that must be connected to the customer’s Active Directory via secure LDAP. Users and groups from the customer Active Directory are used to assign permissions within MPC Core. Customer-defined groups are mapped to predefined roles as part of the fulfillment process. Changes to the permission model can be requested through a service request.

Permissions

MPC Core distinguishes three types of permission:

| Name | Description |

|---|---|

| Restricted | Only Equinix has access and determines the configuration required for service availability. |

| Limited | Only Equinix has access, but the customer can request parameter changes via a service request. |

| Free | Functions are available to the customer through the consoles. |

Application Integration

For some applications or integrations, access to the management environment is required. Common use cases include:

- Integration for backup (Veeam, CommVault, Rubrik)

- Integration for DR tooling (Zerto, Veeam Replication)

- Integration for VDI solutions (Omnissa, Citrix)

- Integration for automation CI/CD and Infrastructure as Code (Terraform, Ansible)

For application integrations, service accounts from the customer Active Directory are assigned to predefined roles in MPC Core.

Extension of the Service

Extending the MPC Core service can be achieved in several ways:

- Adding more of the same servers to the existing clusters

- Extending the number of clusters in the instance, up to a maximum of 5 clusters per installation

- Starting a new VCF instance

When a compute cluster requires strong separation of resources, a second instance can be created within the same fleet. The additional VCF instance includes its own management stack.

Service Description

Service Options

MPC Core includes several service options that are ordered separately.

Back‑up & Restore

Backup and restore of MPC resources are provided through the Managed Private Backup Service (ordered separately). The service includes backup of VM data or application data using a shared backup platform.

Software Licensing

A catalog of non‑licensed software ISOs for MPC is available. This catalog lists software that can be used in VMs running in an MPC Core deployment. Licensed software can be purchased through the Software Licensing product. After procurement, the ISOs will be made available in the catalog.

Customers may also bring their own software licenses. When using Bring Your Own License (BYOL), compliance with the software vendor’s license terms must be validated.

For all licensing, the customer is responsible for meeting the software vendor’s compliance requirements.

Support Plan

The support plan provides an optional service that covers additional service requests and other services such as extended support, additional reporting, and design support.

The Managed Solutions Premier Support Plan is a prepaid program that allows the purchase of monthly or annual (one‑time payment) blocks of support hours at a discount. Support hours are consumed in increments of fifteen (15) minutes.

Without a prepaid Managed Solutions Premier Support Plan, support is charged at the standard hourly rate of the Premier Support Service. Support hours are calculated in increments of fifteen (15) minutes.

| PURCHASE UNIT | TYPE | CHARGE TYPE | UOM | ORDERING AND BILLING |

|---|---|---|---|---|

| Technical Support Plan | Monthly | Baseline | hour | Monthly reservation of hours for technical support |

| Technical Support Plan | Annual | Baseline | hour | Yearly reservation of hours for technical support |

The plan is not designated to one specific Managed Solutions product but applies to all Managed Solutions products purchased.

If all hours in the plan have been consumed, any additional hours will be charged at the standard hourly rate under the “Premier Support Service.”

Monthly or prepaid Managed Solutions Premier Support Plan hours do not roll over and are forfeited if not used. Usage beyond the pre‑purchased amount is billed at the regular “Premier Support Service” hourly rate unless an upgrade is requested.
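The consumption rules can be illustrated with a short calculation. The sketch assumes that work is rounded up to the next 15-minute increment, which the text implies but does not state explicitly; the function names are hypothetical and no rates are modeled.

```python
import math

INCREMENT_MINUTES = 15  # support time is counted in 15-minute increments


def billable_hours(minutes_worked: int) -> float:
    """Round worked minutes up to the next increment (assumed) and return hours."""
    increments = math.ceil(minutes_worked / INCREMENT_MINUTES)
    return increments * INCREMENT_MINUTES / 60


def split_plan_and_overage(plan_hours: float, used_hours: float) -> tuple[float, float]:
    """Split used hours into hours covered by the plan and hours billed
    at the standard Premier Support Service rate."""
    covered = min(plan_hours, used_hours)
    return covered, used_hours - covered


print(billable_hours(20))                # 0.5
print(billable_hours(50))                # 1.0
print(split_plan_and_overage(10, 12.5))  # (10, 2.5)
```

Unused plan hours are not carried into the next period, so the split is evaluated per plan period.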

The plan is country‑specific and cannot be linked to a specific IBX data center.

Migration Support

To migrate workloads to MPC Core from on‑premises environments, other colocation facilities, or public‑cloud‑based VMware platforms (such as AWS or OCVS), Equinix provides migration tooling that enables self‑service workload migration without refactoring applications. The tooling supports workloads running on vSphere, Hyper‑V, and other virtualization platforms by using multiple migration methods, including hypervisor‑level and in‑guest OS migration.

To enable migration for vSphere workloads, the customer receives a VCF Operations HCX appliance that is installed in the customer environment and paired with the MPC Core environment during configuration. The tooling supports asynchronous replication.

- vMotion Migration - This method uses the VMware vMotion protocol to move a virtual machine to a remote site.

  - Designed for moving a single virtual machine at a time

  - Migrates the virtual machine state with no service interruption

  - Requires 250 Mbps or higher throughput capability

  - Latency < 150 ms

  - Minimum MTU: 1150

  - Maximum VMs per operation: 1

- Cold Migration - This method uses the VMware NFC protocol and is automatically used when the source virtual machine is powered off.

  - Requires 250 Mbps or higher throughput capability

  - Latency < 150 ms

  - Minimum MTU: 1150

  - Maximum VMs per operation: 1

- Bulk Migration - This method uses VMware vSphere Replication to move virtual machines to the destination site.

  - Designed for moving multiple virtual machines in parallel

  - Can be scheduled to complete at a predefined time

  - The virtual machine runs at the source until failover begins (service interruption similar to a reboot)

  - Requires 50 Mbps or higher throughput capability

  - Latency < 150 ms

  - Minimum MTU: 1150

  - Supports up to 1000 concurrent migrations (dependent on HCX Manager appliance size)

- Replication Assisted vMotion (RAV) - Replication Assisted vMotion combines advantages of Bulk Migration (parallel operations, resiliency, scheduling) with vMotion (zero‑downtime state migration).

  - Requires 250 Mbps or higher throughput capability

  - Latency < 150 ms

  - Minimum MTU: 1150

  - Supports up to 1000 concurrent migrations (dependent on HCX Manager appliance size)
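The network requirements listed for each method can be combined into a quick pre-check of a migration link. This is a minimal sketch built only from the thresholds above; it does not query HCX, and the function name is hypothetical.

```python
# Minimum throughput in Mbps per migration method, from the list above.
MIN_THROUGHPUT_MBPS = {
    "vMotion Migration": 250,
    "Cold Migration": 250,
    "Bulk Migration": 50,
    "Replication Assisted vMotion (RAV)": 250,
}
MAX_LATENCY_MS = 150  # latency must be below this value
MIN_MTU = 1150        # minimum MTU for all methods


def eligible_methods(throughput_mbps: float, latency_ms: float, mtu: int) -> list[str]:
    """Return the migration methods whose network requirements the link meets."""
    if latency_ms >= MAX_LATENCY_MS or mtu < MIN_MTU:
        return []
    return [
        method
        for method, required in MIN_THROUGHPUT_MBPS.items()
        if throughput_mbps >= required
    ]


print(eligible_methods(throughput_mbps=100, latency_ms=80, mtu=1500))
# ['Bulk Migration']
```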

Easy migration requires dedicated Fabric circuits or VLANs in a Cross Connect. After migration is complete, the migration tooling is removed.

Service Demarcation

The following shows the demarcation of responsibilities between the customer and Equinix.

| AREA | RESPONSIBILITY | DETAILS |

|---|---|---|

| Customer Data | Customer | Data governance and data access rights management |

| Account & Access Management | Customer | User account management, identity management, multifactor authentication (MFA) |

| Data Protection | Customer | Antivirus, client & server data encryption, network traffic encryption |

| Applications | Customer | Functional and technical application management |

| Databases | Customer | MySQL, Microsoft SQL, Oracle, PostgreSQL |

| Middleware | Customer | Enterprise Service Bus, Tomcat, .NET Framework |

| Operating System | Customer | Windows & Linux OS management |

| Compute Virtualization | Equinix Managed Solutions | VMware ESXi hypervisor, vCenter configuration |

| Network Virtualization | Equinix Managed Solutions | VMware NSX platform, NSX configuration (firewall, edges), connectivity |

| Compute | Equinix Managed Solutions | Dedicated compute resources |

| Storage | Equinix Managed Solutions | vSAN storage, vSAN configuration |

| Datacenter Connect | Equinix Managed Solutions | Redundant switches |

| Datacenter Facilities | Equinix Managed Solutions | Secure cabinet express, power, cooling, access control, physical security |

Purchase Units

The MPC service is charged monthly based on Baseline values or Baseline with Overage charge types.

- Baseline – The specific volume of the Unit of Measure (UOM) of the service as defined in the order.

- Overage – The quantity of the service consumed by the customer that exceeds the contracted Baseline volume.

Catalog of Purchase Units

| Category | Purchase Unit | UOM | Install Fee | Billing Method | Overage |

|---|---|---|---|---|---|

| MPC Service | Connectivity | Each | No | Baseline | No |

| MPC Compute | <Host Type> | Host | Yes | Baseline | No |

| MPC Service Option | Gateway Firewall <n> Size Edge | Edge | No | Baseline | No |

| MPC Service Option | Distributed Firewall <n> Cores | Host | No | Baseline | No |

- See Service Option Gateway Firewall for the number of cores

- The number of cores applies to the host used in the cluster

Roles and Responsibilities

The following outlines how responsibilities are divided between Equinix and the customer throughout service fulfillment, onboarding, and ongoing operations.

Tenant Provisioning

| ACTIVITIES | EQUINIX | CUSTOMER |

|---|---|---|

| Schedule / execute project kickoff meeting | RA | CI |

| Schedule / execute customer onboarding | RA | CI |

| Delivery of the hosts in a cluster according to the design blueprint and order | RAC | I¹ |

| Delivery of the storage capacity in accordance with design | RAC | I¹ |

| Delivery of the connectivity in accordance with the order | RAC | I¹ |

| Delivering agreed network functionality in accordance with design (optional) | RAC | C |

| Set up service accounts for selected applications | RAC | C |

| Delivery of the MPC Operational Console | RAC | I¹ |

| Delivery of user groups according to the customer | RAC | I¹ |

Acceptance Into Service

Once onboarding activities have been completed, testing will confirm that the product was delivered successfully and is ready for billing.

| ACTIVITIES | EQUINIX | CUSTOMER |

|---|---|---|

| Test access to MPC documentation on docs.equinix.com | CI | RA |

| Test access to the different consoles for the different groups | CI | RA |

| Confirm MPC fulfillment based on provided evidence | CI | RA |

| Check handover documentation | CI | RA |

| Set product as enabled for customer internal systems | RA | I |

Operational

Once the Managed Private Cloud service is enabled, the following operational items are addressed:

| ACTIVITIES | EQUINIX | CUSTOMER |

|---|---|---|

| Technical management of the service (overall) | RAC | I* |

| Patching and LCM of software: VCF Operations, vSphere, NSX, vSAN, HCX | RAC | I* |

| MPC infrastructure monitoring and maintenance | RA | I |

| Keep applications with integration into MPC compliant with MPC Core versions | I* | RAC |

| Functional management of the customer environment within the service (overall) | I¹ | RAC |

| Manage capacity of the compute and storage in the cluster | I¹ | RAC |

| Create, import and manage VMs and vApps | I¹ | RAC |

| Keep VM‑tooling up to date | I¹ | RAC |

| Scale VMs up and down | I¹ | RACI |

| Manage VM snapshots | RACI | |

| Manage access to VMs with console | RACI | |

| Request performance statistics | RACI | |

| Create and fill “Library” with customer’s own ISO/OVA files | RACI | |

| Separate or group VMs for availability or performance | I¹ | RAC |

| NFV: Virtual L2 networks | I¹ | RAC |

| NFV: Standard firewalling | I¹ | RAC |

| NFV: Routing (static) | I¹ | RAC |

| NFV: Routing (dynamic OSPF / BGP) | I¹ | RAC |

| NFV: NAT | I¹ | RAC |

| NFV: DHCP | I¹ | RAC |

| NFV: Load Balancing | I¹ | RAC |

| NFV: VPN (IPsec, Client) | I¹ | RAC |

| Set up and manage scripting & automation capabilities | RACI | |

Note: RACI stands for Responsible, Accountable, Consulted and Informed.

I¹ Informing is only mandatory for tasks that have an impact on the functioning of the user environment.

I² Informing is only required for tasks that have an impact on the operation and/or management of the service.

Incident Management

Incident Management is included as part of service support. All incidents are handled based on priority. Priority is determined after an incident is reported and assessed by Equinix based on the information provided.

| Priority | Impact / Urgency | Description |

|---|---|---|

| P1 High | Unforeseen unavailability of a service or environment delivered and managed by Equinix, in accordance with the service description, due to a disruption. The customer cannot fulfill obligations towards its own users. The customer experiences direct demonstrable impact due to unavailability of this functionality. | The service must be restored immediately; production environments are unavailable with platform‑wide disruptions. |

| P2 Medium | The service does not offer full functionality, or operates with partial functionality or reduced performance, resulting in user impact. The customer experiences direct demonstrable impact due to limited availability of the functionality. | The service must be repaired the same working day; the management environment is not available. |

| P3 Low | The service functions with limited availability for one or more users, and a workaround is in place. | The repair timeline is determined in consultation with the reporting person. |

Note: This classification does not apply to disruptions that are, for example, caused by user-specific applications or user actions, or that depend on third parties. Incidents can be submitted through the Customer Portal under Managed Solutions. P1 incidents must be submitted by phone.

Service Requests

Service requests are used to report service issues or to request assistance with implementation or configuration changes. Standard configuration changes can be requested through the MPC self‑service portal as a Service Request. Support is available 24×7×365. Two types of service requests are available:

- Included - Service requests that are within the scope of the service and do not incur additional charges.

- Additional - Service requests that are outside the scope of the service and incur additional charges.

| Request Name | Included / Additional |

|---|---|

| Create a new permission group | Included |

| Remove a permission group | Included |

| Add VLAN to MPC Core instance | Included |

| Delete a VLAN from MPC Core | Included |

| Create Edge Cluster | Additional |

| Delete Edge Cluster | Additional |

| Resize Edge Cluster | Additional |

Changes not listed above can be requested by selecting Change in the service request module. Equinix will perform an impact analysis to determine feasibility, cost, and lead time. Charges related to service requests are deducted from the Premier Support Plan balance. If the balance is insufficient, charges are invoiced at the prevailing rate. Requests that impact baseline capacity, ordered quantities, or any changes impacting the monthly service fee must be requested through the Equinix Sales team.

Reporting

As part of the service, customers receive monthly reporting covering tickets raised against SLA parameters and capacity per cluster.

Service Levels

The Service Level Agreement (SLA) defines measurable performance levels associated with the MPC service and specifies remedies if Equinix does not meet these levels. Service credits described in the Product Policy are the exclusive remedy for SLA breaches.

The Support SLA applies to incident registration and resolution.

| Priority | Response Time¹ | Resolution Time² | Execution of Work | SLA³ |

|---|---|---|---|---|

| P1 | < 30 min | < 4 hours | 24x7 | 95% |

| P2 | < 60 min | < 24 hours | 24x7 | 95% |

| P3 | < 120 min | < 5 days | 24x7 | 95% |

¹ Response time is measured from the moment a trouble ticket is submitted until an Equinix Managed Solutions specialist provides a formal response.

² Resolution time is measured from ticket creation to closure, cancellation, or handover to IBX Support.

³ The SLA applies to the response time. Details on the SLA can be found in the Product Policy.
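The targets in the Support SLA table can be checked against ticket timestamps as sketched below. The sketch assumes that the five-day P3 resolution target means calendar days, since work is executed 24x7; the names are hypothetical and the timestamps are sample values.

```python
from datetime import datetime, timedelta

# (response target, resolution target) per priority, from the table above.
SUPPORT_SLA = {
    "P1": (timedelta(minutes=30), timedelta(hours=4)),
    "P2": (timedelta(minutes=60), timedelta(hours=24)),
    "P3": (timedelta(minutes=120), timedelta(days=5)),  # calendar days assumed
}


def response_within_target(priority: str, submitted: datetime, responded: datetime) -> bool:
    """True when the formal response arrived within the response target."""
    response_target, _ = SUPPORT_SLA[priority]
    return responded - submitted < response_target


def resolution_within_target(priority: str, created: datetime, closed: datetime) -> bool:
    """True when the ticket was closed within the resolution target."""
    _, resolution_target = SUPPORT_SLA[priority]
    return closed - created < resolution_target


submitted = datetime(2025, 1, 6, 9, 0)
print(response_within_target("P1", submitted, datetime(2025, 1, 6, 9, 25)))    # True
print(resolution_within_target("P1", submitted, datetime(2025, 1, 6, 14, 0)))  # False
```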

The Availability Level for MPC service reflects availability of a cluster. The service is considered unavailable when infrastructure managed by Equinix causes the workload cluster to enter an error state that disrupts customer services.

| AVAILABILITY SERVICE LEVEL | DESCRIPTION |

|---|---|

| 99.95%+ | Less than 22 minutes of unavailability per calendar month |
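The downtime figure follows directly from the availability percentage. The calculation below assumes a 30-day month, which gives 21.6 minutes and is consistent with the "less than 22 minutes" stated in the table; the function name is hypothetical.

```python
def allowed_downtime_minutes(availability_pct: float, days_in_month: int = 30) -> float:
    """Maximum unavailability in minutes for a given availability percentage."""
    total_minutes = days_in_month * 24 * 60
    return total_minutes * (100 - availability_pct) / 100


# 30 days = 43,200 minutes; 0.05% of that is 21.6 minutes.
print(round(allowed_downtime_minutes(99.95), 1))  # 21.6
```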

Service credit terms for availability are documented in the Product Policy. Availability does not include data restore activities. When Managed Private Backup is contracted, customers can restore data via its operational console. When Managed Private Backup is not contracted, customers are responsible for restoring their own data.