Each Network Edge device boots for the first time with a specific initial configuration. This configuration can vary significantly by vendor. If your device is not described here, you might still find common elements in the description of another vendor's device.

Initial Configuration

During device creation, a best-practice configuration is applied for all initial global settings. In addition to the global settings that are common to all devices, local settings are applied that define interfaces, Layer 3 virtualization, access lists, and routes for remote reachability.

This final configuration is a combination of:

- User-defined inputs through the portal or API

- Standard templates that lay the foundation for cloud connectivity

- Pre-loaded inventory items from our internal orchestration (such as unique IP addresses)
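As a rough illustration of how three such sources could be combined into one configuration, here is a minimal Python sketch. It is not the Equinix orchestration logic: the function name, the setting keys, and the example values are all hypothetical, and because this article does not say how overlapping settings are resolved, the sketch simply rejects overlaps instead of guessing a precedence.

```python
from typing import Any, Dict


def combine_config_sources(**sources: Dict[str, Any]) -> Dict[str, Any]:
    """Combine named setting sources into one dict, rejecting overlapping keys."""
    combined: Dict[str, Any] = {}
    owner: Dict[str, str] = {}  # remembers which source supplied each key
    for source_name, settings in sources.items():
        for key, value in settings.items():
            if key in combined:
                raise ValueError(
                    f"{key!r} is set by both {owner[key]!r} and {source_name!r}"
                )
            combined[key] = value
            owner[key] = source_name
    return combined


# Hypothetical inputs; none of these keys or values come from Equinix.
initial = combine_config_sources(
    user_inputs={"hostname": "edge-01", "metro": "SV"},
    templates={"cloud_vrf": "Cloud", "mgmt_vrf": "MGMT"},
    inventory={"loopback_ip": "203.0.113.10"},  # documentation-range address
)
print(initial)
```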

Although there is no practical effect or user impact at this time, Equinix deploys devices in an OpenStack environment and uses the same tenant for all devices deployed by the same account in the same metro. In the future, this will allow additional choices and interactions between the devices you have deployed.

Interfaces

Unless specifically mentioned otherwise, most devices boot up with at least 10 interfaces active, and some devices show these under the interfaces tab in device details.

Equinix has determined that most use cases won't require any more than this quantity, and we believe it's appropriate to normalize where we can. Contact Equinix operations if you are at risk of running out of available interfaces.

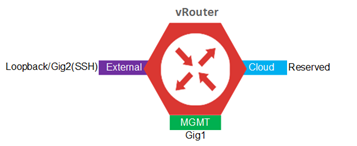

Devices have three interfaces instantiated by default:

- Management (MGMT)

- External/Public internet

- A reserved network interface

The MGMT interface is used internally by provisioning systems for monitoring and configuration management. SSH is used for secure access, and the Public Internet interface is a loopback used for external reachability. The Network interface is reserved for future use. The remaining interfaces are reserved for connectivity to cloud services.

Network Edge uses Layer 3 virtualization for control and data plane isolation. The implementation method is vendor-specific; consult the respective vendor documentation for details.

For this example, we use VRF to describe Layer 3 virtualization. Your implementation might be different.

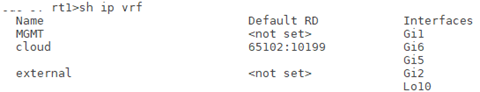

Three VRFs are created for virtual devices:

- MGMT

- Cloud

- External

The MGMT interface is associated with the MGMT VRF. The SSH and loopback interfaces are assigned to the External VRF, and the remaining reserved interfaces are assigned to the Cloud VRF.
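The assignment described above can be summarized as a small lookup. The following Python sketch is illustrative only: the role labels and interface names (`mgmt0`, `ssh0`, `lo0`, `cloud1`, `cloud2`) are hypothetical, and the actual names depend on the vendor and its Layer 3 virtualization method.

```python
from collections import defaultdict
from typing import Dict, List

# Role-to-VRF assignment as described in this article.
VRF_BY_ROLE: Dict[str, str] = {
    "mgmt": "MGMT",
    "ssh": "External",
    "loopback": "External",
    "cloud": "Cloud",
}


def group_interfaces_by_vrf(interface_roles: Dict[str, str]) -> Dict[str, List[str]]:
    """Group interface names by the VRF their role belongs to."""
    grouped: Dict[str, List[str]] = defaultdict(list)
    for name, role in interface_roles.items():
        grouped[VRF_BY_ROLE[role]].append(name)  # KeyError on an unknown role
    return dict(grouped)


# Hypothetical interface names, for illustration only.
example = group_interfaces_by_vrf(
    {
        "mgmt0": "mgmt",
        "ssh0": "ssh",
        "lo0": "loopback",
        "cloud1": "cloud",
        "cloud2": "cloud",
    }
)
print(example)
```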

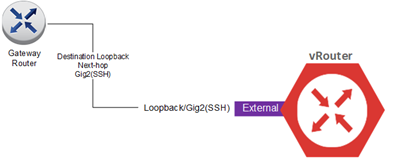

Each device comes equipped with a loopback interface that is created during device creation, placed in the External VRF, and assigned an Equinix-owned public IP address. This interface is used for external connectivity requirements such as creating IPsec VPN tunnels and SSH access. External reachability to the virtual device is achieved by adding a host route on the gateway router that points to the loopback address, with the SSH interface on the virtual device as the next hop. The gateway router is part of the Equinix infrastructure for Network Edge, and the routes are configured automatically as part of the provisioning process for virtual devices.

The MGMT interface is placed in its own VRF and is used for management and provisioning activities.
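To make the host-route idea concrete, here is a minimal Python sketch that builds a /32 route to a loopback address with an SSH interface address as the next hop. It only models the data; it does not show how the gateway router is actually configured. The `HostRoute` type, the `host_route` function, and both addresses (taken from documentation-only ranges) are assumptions for illustration.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class HostRoute:
    destination: ipaddress.IPv4Network  # always a /32 in this sketch
    next_hop: ipaddress.IPv4Address


def host_route(loopback_ip: str, ssh_interface_ip: str) -> HostRoute:
    """Build a host route: loopback address as a /32, SSH interface as next hop."""
    loopback = ipaddress.IPv4Address(loopback_ip)
    return HostRoute(
        destination=ipaddress.IPv4Network(f"{loopback}/32"),
        next_hop=ipaddress.IPv4Address(ssh_interface_ip),
    )


# Placeholder addresses from documentation ranges, not real Equinix addresses.
route = host_route("203.0.113.10", "198.51.100.2")
print(f"{route.destination} via {route.next_hop}")
```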

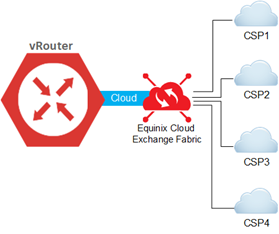

The remaining interfaces reserved for cloud connectivity are associated with the cloud VRF and connected to the Equinix Fabric. These interfaces can then be used for virtual connections to respective cloud service providers.

The interfaces that remain after those are reserved for user customization and some forthcoming features, such as dedicated NSP links, service chaining, and others to be announced. Equinix orchestration won't provision cloud services on these reserved interfaces.

As of the current release, the portal and API offer no configuration shortcuts for NAT, route filtering, or similar services, but customers can generally configure and activate these services directly on the device as needed. A few use cases are specified below as examples.

Note, however, that the more you customize the device directly, whether at the CLI over SSH or with a third-party application or software orchestrator (as with SD-WAN), the further the device can drift out of sync with the features and settings that the Equinix Network Edge portal tracks. It's the customer's responsibility to understand what has been customized and when a new setting from the portal might overwrite what was done outside of the portal. In short, any device configuration not done through the portal is considered customized, and all of these caveats apply. We recommend using the portal for every configuration parameter that the portal exposes.
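One way to keep track of what has been customized is to compare the settings you entered in the portal with what is actually on the device. The Python sketch below shows the idea with two plain dictionaries. It is a hypothetical helper, not an Equinix tool or API, and the setting names and values are invented for the example.

```python
from typing import Any, Dict, Tuple

ABSENT = "<absent>"  # marker for a setting that exists on only one side


def find_drift(
    portal_view: Dict[str, Any], device_view: Dict[str, Any]
) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (portal_value, device_value)} for every mismatch."""
    drift: Dict[str, Tuple[Any, Any]] = {}
    for key in sorted(set(portal_view) | set(device_view)):
        portal_value = portal_view.get(key, ABSENT)
        device_value = device_view.get(key, ABSENT)
        if portal_value != device_value:
            drift[key] = (portal_value, device_value)
    return drift


# Hypothetical settings: one changed at the CLI, one added at the CLI.
portal = {"bgp_remote_asn": 64496, "hostname": "edge-01"}
device = {"bgp_remote_asn": 64497, "hostname": "edge-01", "nat_enabled": True}
drift = find_drift(portal, device)
print(drift)
```

Anything that appears in the result was changed or added outside the portal and is a candidate for being overwritten by a later portal change.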

Layer 3 Configuration

The initial Layer 3 configuration that establishes BGP connectivity should always be done in the portal or your SaaS application. Once you enter the local and remote ASNs and the local and remote IP addresses in the portal, the backend provisioning system configures them on the virtual device. Optionally, you can add a BGP authentication key.
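Before entering these values, it can help to sanity-check them. The Python sketch below models the inputs named above (local and remote ASN, local and remote IP address, optional authentication key) and applies basic checks. The `BgpPeering` class and its field names are hypothetical and do not reflect the portal or API schema; the ASN bounds assume 4-byte ASNs with 0 excluded as reserved.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional

MAX_ASN = 2**32 - 1  # upper bound of the 4-byte ASN space


@dataclass(frozen=True)
class BgpPeering:
    local_asn: int
    remote_asn: int
    local_ip: str
    remote_ip: str
    auth_key: Optional[str] = None  # optional, as in the portal

    def __post_init__(self) -> None:
        for asn in (self.local_asn, self.remote_asn):
            if not 1 <= asn <= MAX_ASN:
                raise ValueError(f"ASN out of range: {asn}")
        # ip_address() raises ValueError if a string is not a valid address.
        local = ipaddress.ip_address(self.local_ip)
        remote = ipaddress.ip_address(self.remote_ip)
        if local == remote:
            raise ValueError("local and remote IP addresses must differ")


# Documentation-range ASNs and addresses, for illustration only.
peering = BgpPeering(
    local_asn=64500,
    remote_asn=64496,
    local_ip="192.0.2.1",
    remote_ip="192.0.2.2",
)
print(peering)
```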

Once the initial BGP configuration is complete and connectivity is established, you can manually configure other parameters as needed through your device CLI. Route maps, default route generation, AS-path prepending, and updating descriptions are some of the more common tasks.
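For readers unfamiliar with AS-path prepending, the following Python sketch shows only the concept: repeating your own ASN at the front of an advertised path makes that path longer, so a neighbor comparing otherwise equal routes tends to prefer the shorter one. It is not device configuration, the actual CLI syntax is vendor-specific, and the function name and ASNs (from the documentation range) are hypothetical.

```python
from typing import List


def prepend_as_path(as_path: List[int], local_asn: int, times: int = 1) -> List[int]:
    """Return a new AS path with local_asn added to the front `times` times."""
    if times < 1:
        raise ValueError("times must be at least 1")
    return [local_asn] * times + list(as_path)


LOCAL_ASN = 64500  # documentation-range ASN

# The same prefix advertised over two links: unmodified on the primary link,
# prepended twice on the backup link to make it less attractive.
primary_path = [LOCAL_ASN]
backup_path = prepend_as_path(primary_path, LOCAL_ASN, times=2)

print(primary_path, len(primary_path))
print(backup_path, len(backup_path))
```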

Protocol Support

Each device supports a wide variety of protocols, and each has its own workflow for enabling them. Some devices have proprietary protocols found only in devices from a single vendor.

Devices differ significantly in what they support. Equinix supports standard, non-proprietary protocols. Proprietary protocols at Layer 3 (such as EIGRP) and higher can be used between devices connected over the Equinix switching fabric, because the fabric is unaware of the higher-layer protocols.

In rare situations, traffic patterns might disrupt Equinix Fabric or our Internet service, so we occasionally disable certain traffic or restrict its volume. Multicast traffic is one example. While it's impossible to list every supported protocol, the tables at the end of this article note inclusions and exclusions that we believe might be of interest to many users and their use cases.

Use Cases with Customized Configuration

A series of specific use cases are coming soon from our expert engineers and architects in the field. Each use case includes a summary of what it is, drawings, a statement of why, and a walk-through of what was done to build, customize, finalize, and test it in production.

- Use Case 1: Direct traffic between multiple clouds

- Use Case 2: Network Edge to NSP or colo

- Use Case 3: Backhaul of cloud traffic to a branch office with VPN tunnel

Device Specifications

The following list shows, for each vendor, the devices and software packages currently supported, along with the initial boot-up specifications. We always recommend that you consult the vendor documentation for the most current, detailed, and accurate information about each VNF. A few notes:

- The interface counts show how many interfaces are included at the time of launch and how many are possible per device. This doesn’t mean that all interfaces are usable immediately. Some are reserved for other purposes.

- The “max throughput average” is the sustained throughput that Equinix test labs were able to achieve consistently. Your results might differ, and each device comes with a pre-provisioned amount of throughput that is generally lower than the stated amounts. The sizing of small, medium, and large should correspond with the Edge Instance that is allocated for your device at the time of launch.

- The “boot time average” reflects what Equinix test labs observed repeatedly and consistently across multiple scenarios and configurations. Your results might vary, and these are not the official times stated by the vendor.

- Reserved interfaces and route domains are both stated in the CLI terms that you see when logged in to your device. You should NEVER delete or change these. They are noted purely to indicate what to expect.

- Although many aspects of the starting configuration can be changed with the CLI after the device is launched, always exercise caution before doing so, because Equinix can't guarantee stability and proper operation once the user begins customizing through the CLI or other means. When in doubt, consult Equinix operations or vendor guidance first.